Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Please configure Global configurations > Git Accounts to configure Git Repository is using private repo

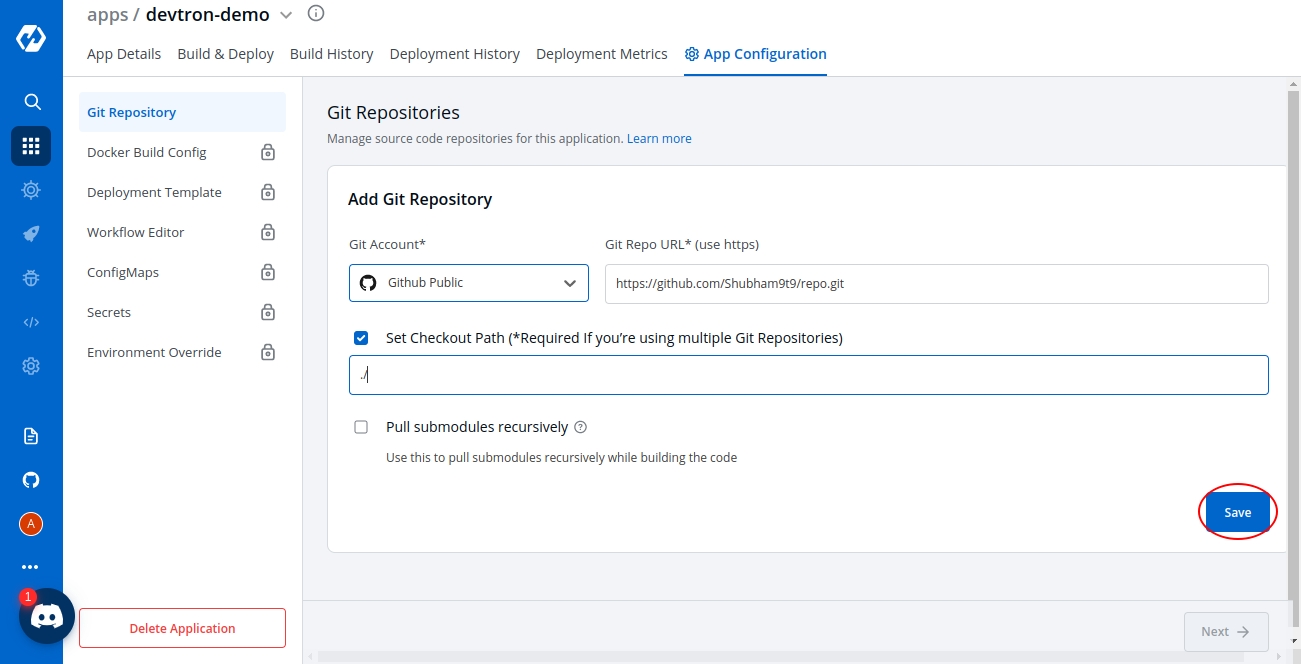

Git Repository is used to pull your application source code during the CI step. Select Git Repository section of the App Configuration. Inside Git Repository when you click on Add Git Repository you will see three options as shown below:

Git Account

Git Repo URL

Checkout Path

Devtron also supports multiple git repositories in a single deployment. We will discuss this in detail in the multi git option below.

In this section, you have to select the git account of your code repository. If the authentication type of the Git account is anonymous, only public git repository will be accessible. If you are using a private git repository, you can configure your git provider via git accounts.

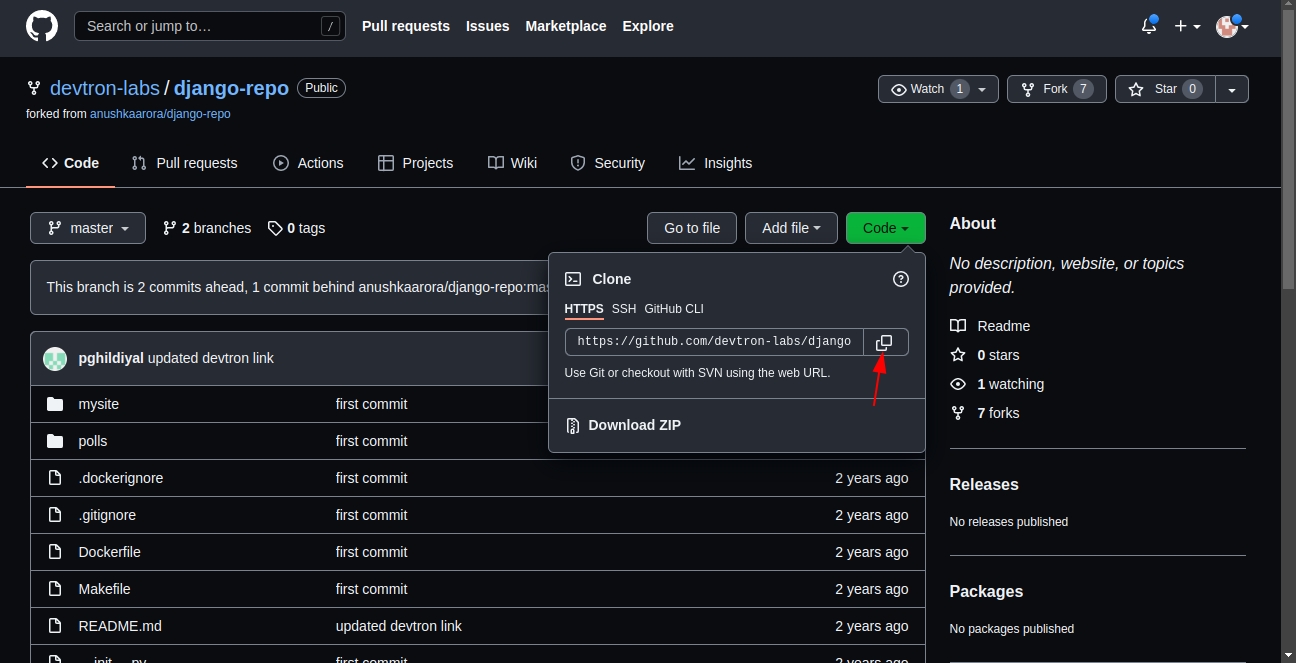

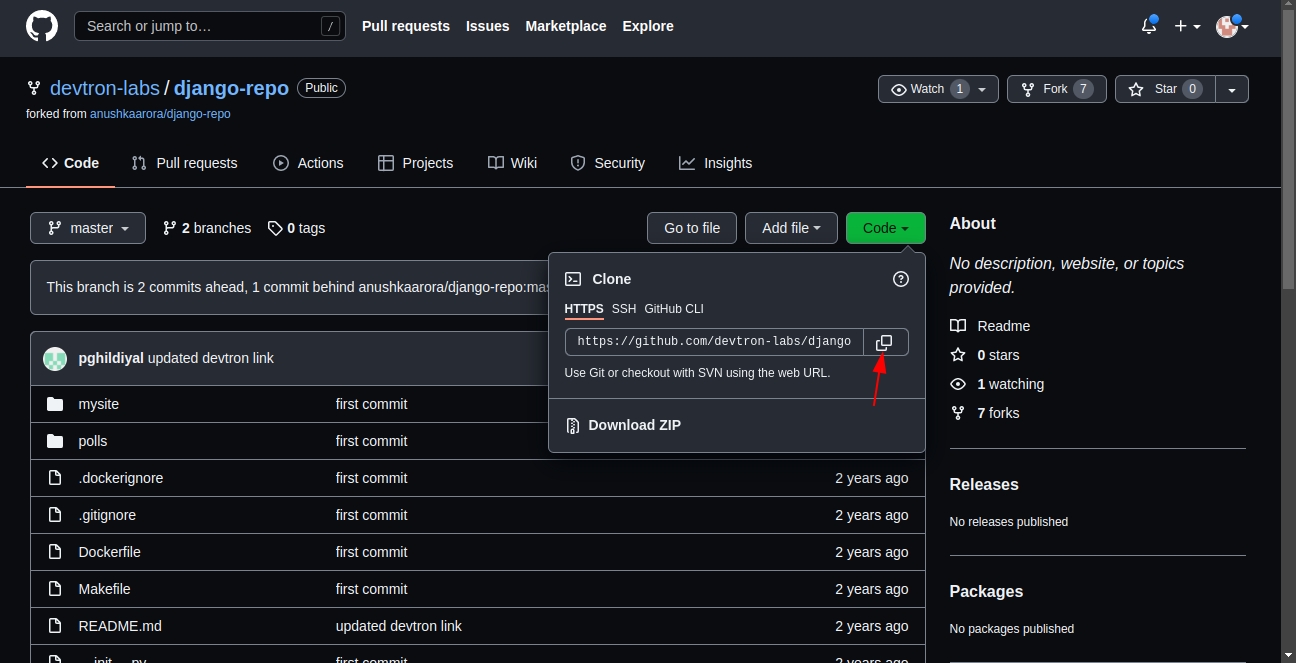

Inside the git repo URL, you have to provide your code repository’s URL. For Example- https://github.com/devtron-labs/django-repo

You can find this URL by clicking on the '⤓ code' button on your git repository page.

Note:

Copy the HTTPS/SSH url of the repository

Please make sure that you've added your dockerfile in the repo.

After clicking on checkbox, git checkout path field appears. The git checkout path is the directory where your code is pulled or cloned for the repository you specified in the previous step.

This field is optional in case of a single git repository application and you can leave the path as default. Devtron assigns a directory by itself when the field is left blank. The default value of this field is ./

If you want to go with a multi-git approach, then you need to specify a separate path for each of your repositories. The first repository can be checked out at the default ./ path as explained above. But, for all the rest of the repositories, you need to ensure that you provide unique checkout paths. In failing to do so, you may cause Devtron to checkout multiple repositories in one directory and overwriting files from different repositories on each other.

This checkbox is optional and is used for pulling git submodules present in a repo. The submodules will be pulled recursively and same auth method which is used for parent repo will be used for submodules.

As we discussed, Devtron also supports multiple git repositories in a single application. To add multiple repositories, click on add repo and repeat steps 1 to 3. Repeat the process for every new git repository you add. Ensure that the checkout paths are unique for each.

Note: Even if you add multiple repositories, only one image will be created based on the docker file as shown in the docker build config.

Let’s look at this with an example:

Due to security reasons, you may want to keep sensitive configurations like third party API keys in a separate access restricted git repositories and the source code in a git repository that every developer has access to. To deploy this application, code from both the repositories is required. A multi-git support will help you to do that.

Few other examples, where you may want to have multiple repositories for your application and will need multi git checkout support:

To make code modularize, you are keeping front-end and back-end code in different repositories.

Common Library extracted out in different repo so that it can be used via multiple other projects.

Due to security reasons you are keeping configuration files in different access restricted git repositories.

The checkout path is used by Devtron to assign a directory to each of your git repositories. Once you provide different checkout paths for your repositories, Devtron will clone your code at those locations and these checkout paths can be referenced in the docker file to create docker image for the application. Whenever a change is pushed to any the configured repositories, the CI will be triggered and a new docker image file will be built based on the latest commits of the configured repositories and pushed to the container registry.

This chart creates a deployment that runs multiple replicas of your application and automatically replaces any instances that fail or become unresponsive. It does not support Blue/Green and Canary deployments.

This is the default deployment chart. You can select Deployment chart when you want to use only basic use cases which contain the following:

Create a Deployment to rollout a ReplicaSet. The ReplicaSet creates Pods in the background. Check the status of the rollout to see if it succeeds or not.

Declare the new state of the Pods. A new ReplicaSet is created and the Deployment manages moving the Pods from the old ReplicaSet to the new one at a controlled rate. Each new ReplicaSet updates the revision of the Deployment.

Rollback to an earlier Deployment revision if the current state of the Deployment is not stable. Each rollback updates the revision of the Deployment.

Scale up the Deployment to facilitate more load.

Use the status of the Deployment as an indicator that a rollout has stuck.

Clean up older ReplicaSets that you do not need anymore.

You can define application behavior by providing information in the following sections:

Make sure Global Configuration > GitOps is configured before moving ahead.

A deployment configuration is a manifest for the application. It defines the runtime behavior of the application.

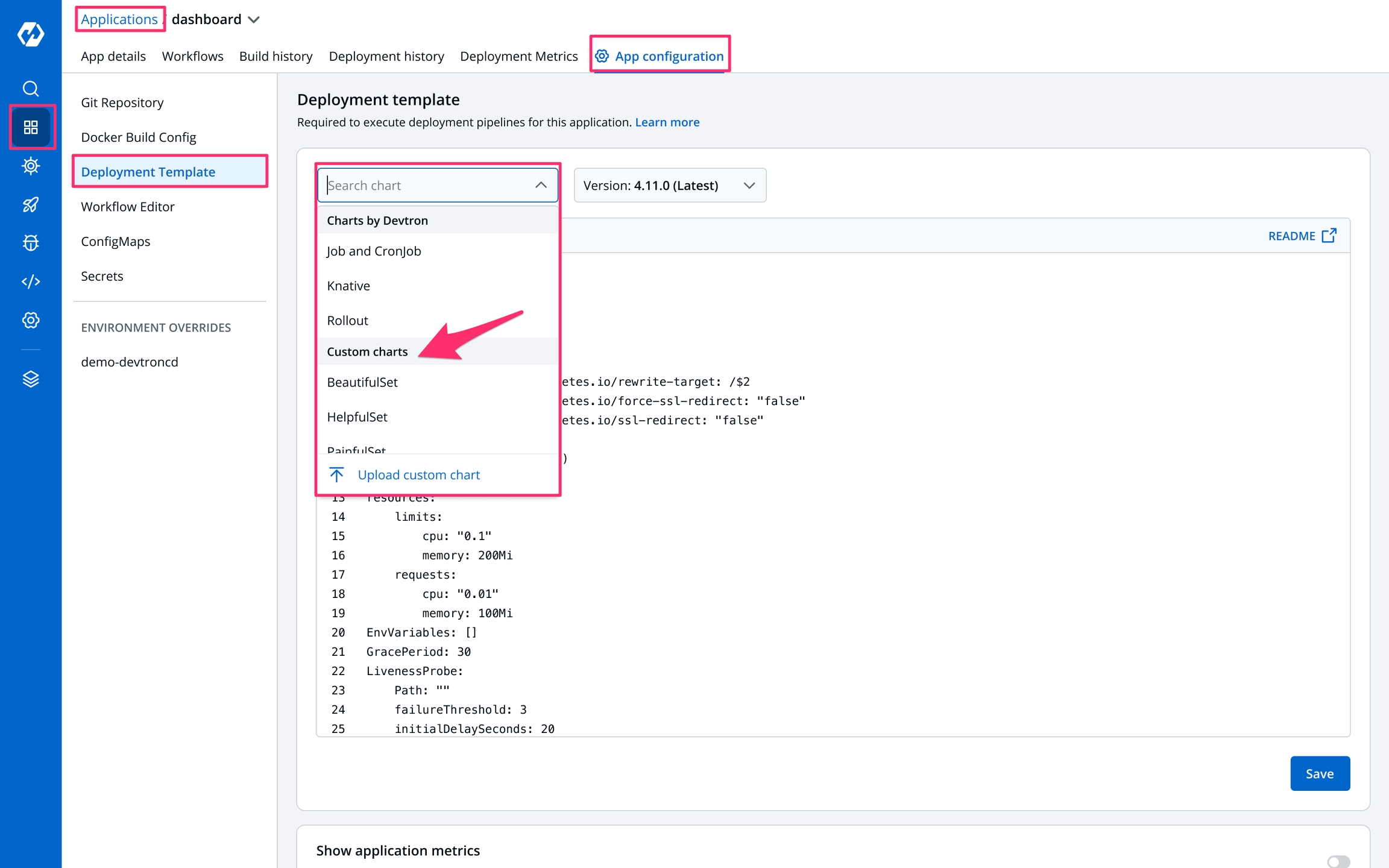

Devtron includes deployment template for both default as well as custom charts created by a super admin.

To configure a deployment chart for your application:

Go to Applications and create a new application.

Go to App Configuration page and configure your application.

On the Deployment Template page, select the drop-down under Chart type.

You can select a chart in one of the following ways:

(Recommended)

Knative

Custom charts are added by a super admin from the section.

Users can select the available custom charts from the drop-down list.

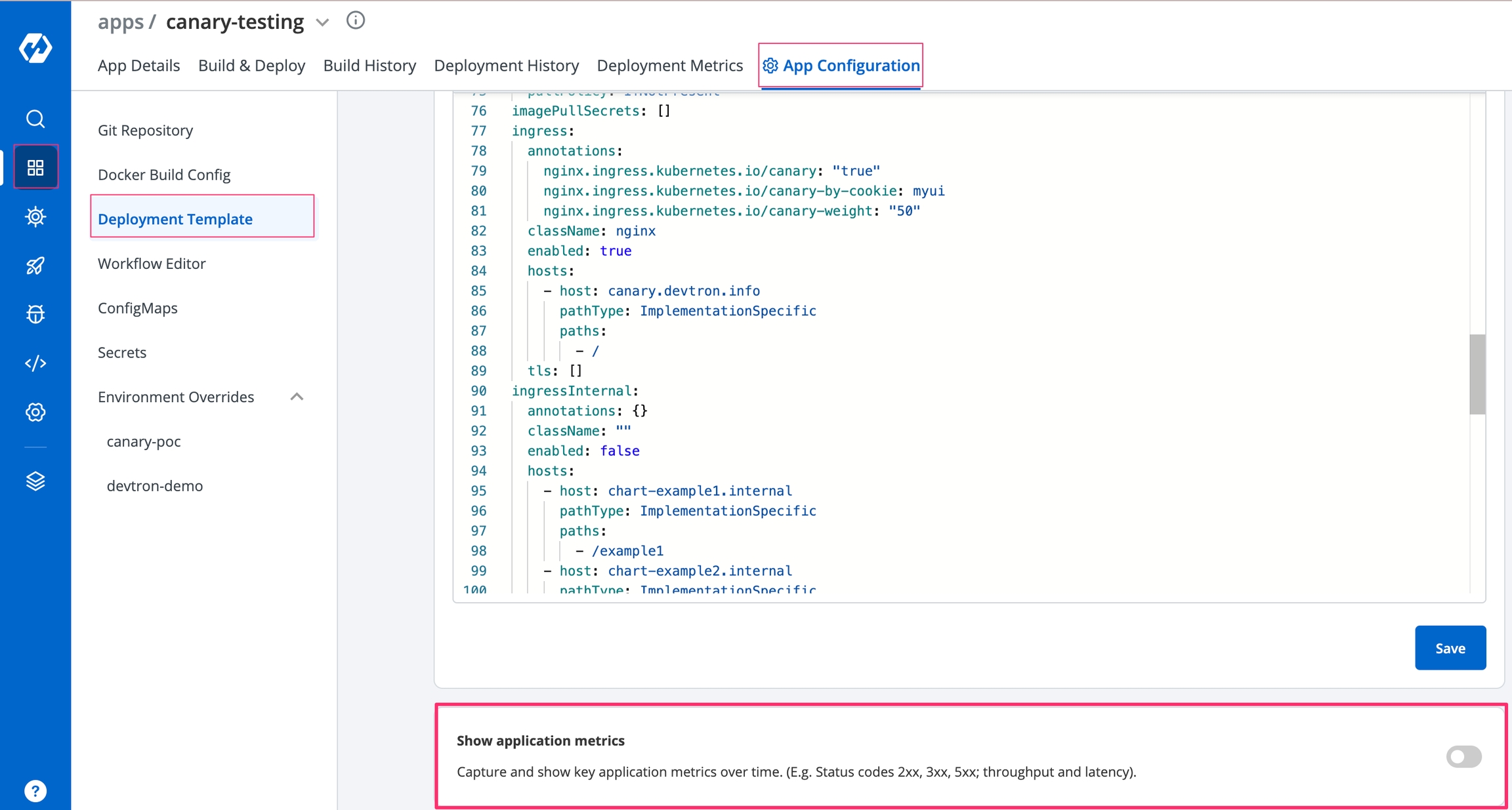

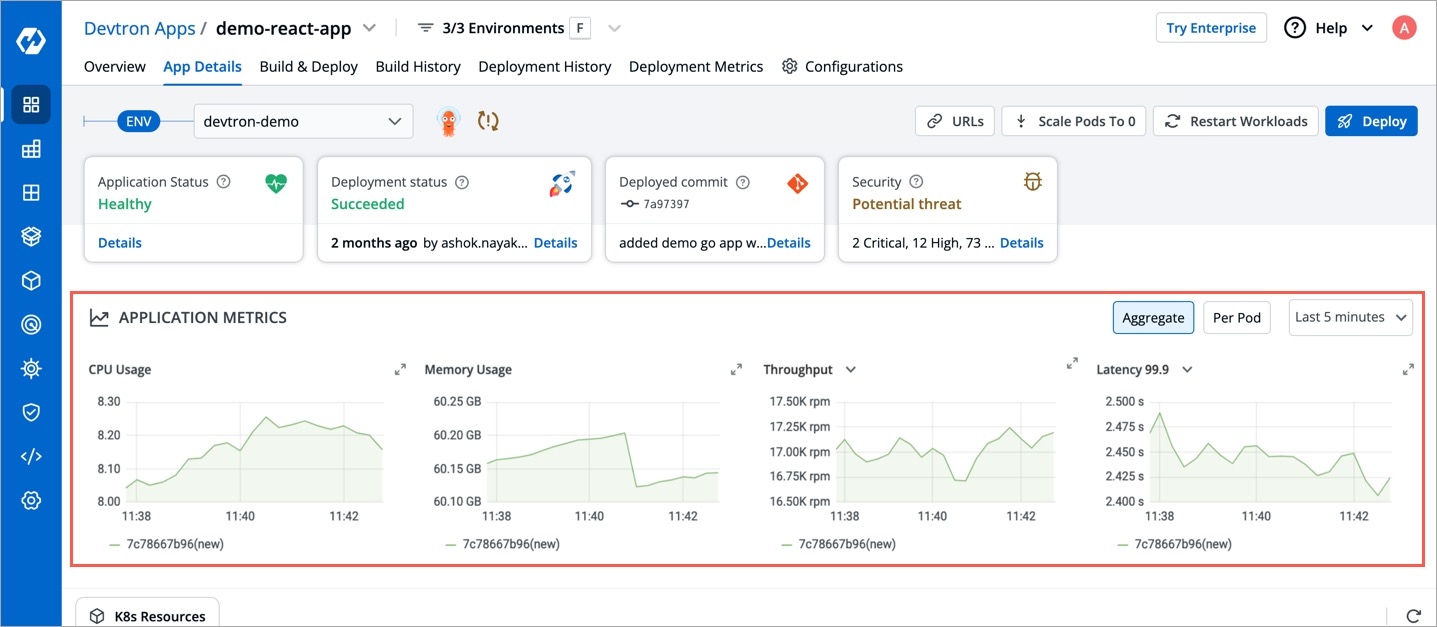

Enable show application metrics toggle to view the application metrics on the App Details page.

IMPORTANT: Enabling Application metrics introduces a sidecar container to your main container which may require some additional configuration adjustments, we recommend you to do load test after enabling it in a non-prod environment before enabling it in production environment.

Select Save to save your configurations.

Please configure Global Configurations before moving ahead with App Configuration

Parts of Documentation

A can be uploaded by a super admin.

Chart version

Select the Chart Version using which you want to deploy the application. Refer Chart Version section for more detail.

Basic Configuration

You can select the basic deployment configuration for your application on the Basic GUI section instead of configuring the YAML file. Refer Basic Configuration section for more detail.

Advanced (YAML)

If you want to do additional configurations, then click Advanced (YAML) for modifications. Refer Advanced (YAML) section for more detail.

Show application metrics

You can enable Show application metrics to see your application's metrics-CPU Service Monitor usage, Memory Usage, Status, Throughput and Latency.

Refer Application Metrics for more detail.

A Job creates one or more Pods and will continue to retry execution of the Pods until a specified number of them successfully terminate. As pods successfully complete, the Job tracks the successful completions. When a specified number of successful completions is reached, the task (ie, Job) is complete. Deleting a Job will clean up the Pods it created. Suspeding a Job will delete its active Pods until the Job is resumed again.

activeDeadlineSeconds

Another way to terminate a Job is by setting an active deadline. Do this by setting the activeDeadlineSeconds field of the Job to a number of seconds. The activeDeadlineSeconds applies to the duration of the job, no matter how many Pods are created. Once a Job reaches activeDeadlineSeconds, all of its running Pods are terminated and the Job status will become type: Failed with reason: DeadlineExceeded.

backoffLimit

There are situations where you want to fail a Job after some amount of retries due to a logical error in configuration etc. To do so, set backoffLimit to specify the number of retries before considering a Job as failed. The back-off limit is set by default to 6. Failed Pods associated with the Job are recreated by the Job controller with an exponential back-off delay (10s, 20s, 40s ...) capped at six minutes. The back-off count is reset when a Job's Pod is deleted or successful without any other Pods for the Job failing around that time.

completions

Jobs with fixed completion count - that is , jobs that have non null completions - can have a completion mode that is specified in completionMode.

parallelism

The requested parallelism can be set to any non-negative value. If it is unspecified, it defaults to 1. If it is specified as 0, then the Job is effectively paused until it is increased.

suspend

The suspend field is also optional. If it is set to true, all subsequent executions are suspended. This setting does not apply to already started executions. Defaults to false.

ttlSecondsAfterFinished

The TTL controller only supports Jobs for now. A cluster operator can use this feature to clean up finished Jobs (either Complete or Failed) automatically by specifying the ttlSecondsAfterFinished field of a Job, as in this example. The TTL controller will assume that a resource is eligible to be cleaned up TTL seconds after the resource has finished, in other words, when the TTL has expired. When the TTL controller cleans up a resource, it will delete it cascadingly, that is to say it will delete its dependent objects together with it. Note that when the resource is deleted, its lifecycle guarantees, such as finalizers, will be honored.

kind

As with all other Kubernetes config, a Job and cronjob needs apiVersion, kind.cronjob and job also needs a section fields which is optional . these fields specify to deploy which job (conjob or job) should be kept. by default, they are set job.

A Cronjob creates Jobs on a repeating schedule , One Cronjob object is like one line of a crontab (cron table) file. It runs a job periodically on a given schedule, written in Cron format. Cronjobs are meant for performing regular scheduled actions such as backups, report generation, and so on. Each of those tasks should be configured to recur indefinitely (for example: once a day / week / month); you can define the point in time within that interval when the job should start.

concurrencyPolicy

A CronJob is counted as missed if it has failed to be created at its scheduled time. For example, If concurrencyPolicy is set to Forbid and a CronJob was attempted to be scheduled when there was a previous schedule still running, then it would count as missed,Acceptable values: Allow / Forbid.

failedJobsHistoryLimit

The failedJobsHistoryLimit fields are optional. These fields specify how many completed and failed jobs should be kept. By default, they are set to 3 and 1 respectively. Setting a limit to 0 corresponds to keeping none of the corresponding kind of jobs after they finish.

restartPolicy

The spec of a Pod has a restartPolicy field with possible values Always, OnFailure, and Never. The default value is Always.The restartPolicy applies to all containers in the Pod. restartPolicy only refers to restarts of the containers by the kubelet on the same node. After containers in a Pod exit, the kubelet restarts them with an exponential back-off delay (10s, 20s, 40s, …), that is capped at five minutes. Once a container has executed for 10 minutes without any problems, the kubelet resets the restart backoff timer for that container, Acceptable values: Always / OnFailure / Never.

schedule

To generate Cronjob schedule expressions, you can also use web tools like https://crontab.guru/.

startingDeadlineSeconds

If startingDeadlineSeconds is set to a large value or left unset (the default) and if concurrencyPolicy is set to Allow, the jobs will always run at least once.

successfulJobsHistoryLimit

The successfulJobsHistoryLimit fields are optional. These fields specify how many completed and failed jobs should be kept. By default, they are set to 3 and 1 respectively. Setting a limit to 0 corresponds to keeping none of the corresponding kind of jobs after they finish.

suspend

The suspend field is also optional. If it is set to true, all subsequent executions are suspended. This setting does not apply to already started executions. Defaults to false.

kind

As with all other Kubernetes config, a Job and cronjob needs apiVersion, kind.cronjob and job also needs a section fields which is optional . these fields specify to deploy which job (conjob or job) should be kept. by default, they are set cronjob.

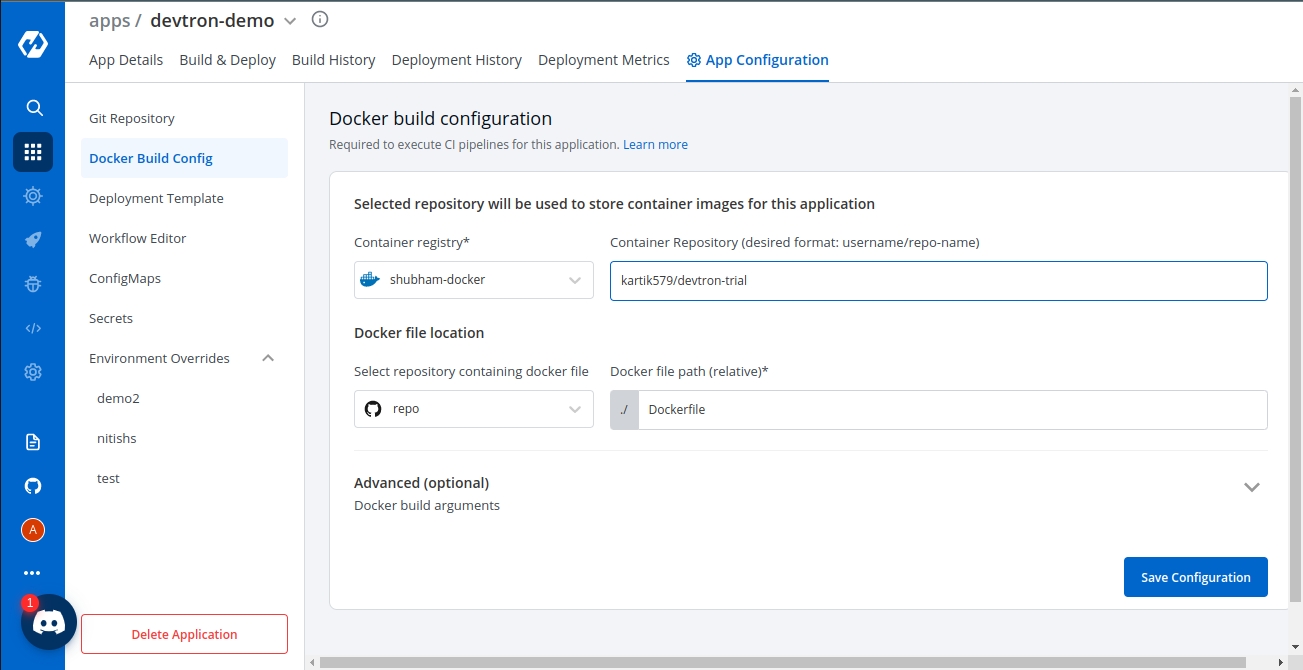

In the previous step, we discussed Git Configurations. In this section, we will provide information on the Docker Build Configuration.

Docker build configuration is used to create and push docker images in the docker registry of your application. You will provide all the docker related information to build and push docker images in this step.

Only one docker image can be created even for multi-git repository applications as explained in the previous step.

To add docker build configuration, You need to provide three sections as given below:

Image store

Checkout path

Advanced

In Image store section, You need to provide two inputs as given below:

Docker registry

Docker repository

Select the docker registry that you wish to use. This registry will be used to store docker images.

In this field, add the name of your docker repository. The repository that you specify here will store a collection of related docker images. Whenever an image is added here, it will be stored with a new tag version.

If you are using docker hub account, you need to enter the repository name along with your username. For example - If my username is kartik579 and repo name is devtron-trial, then enter kartik579/devtron-trial instead of only devtron-trial.

Checkout path including inputs:

Git checkout path

Docker file (relative)

In this field, you have to provide the Git checkout path of your repository. This repository is the same that you had defined earlier in git configuration details.

Here, you provide a relative path where your docker file is located. Ensure that the dockerfile is present on this path.

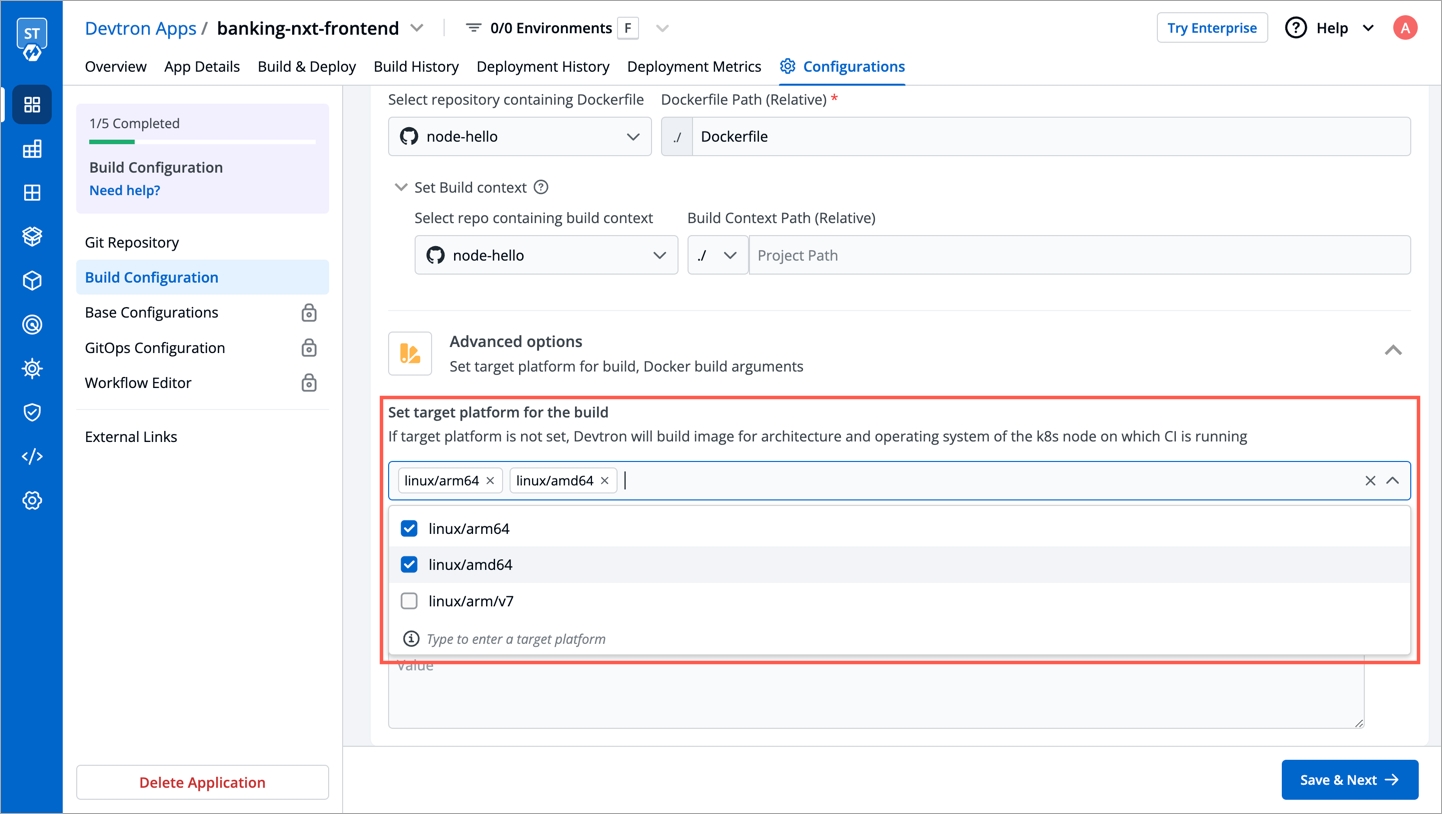

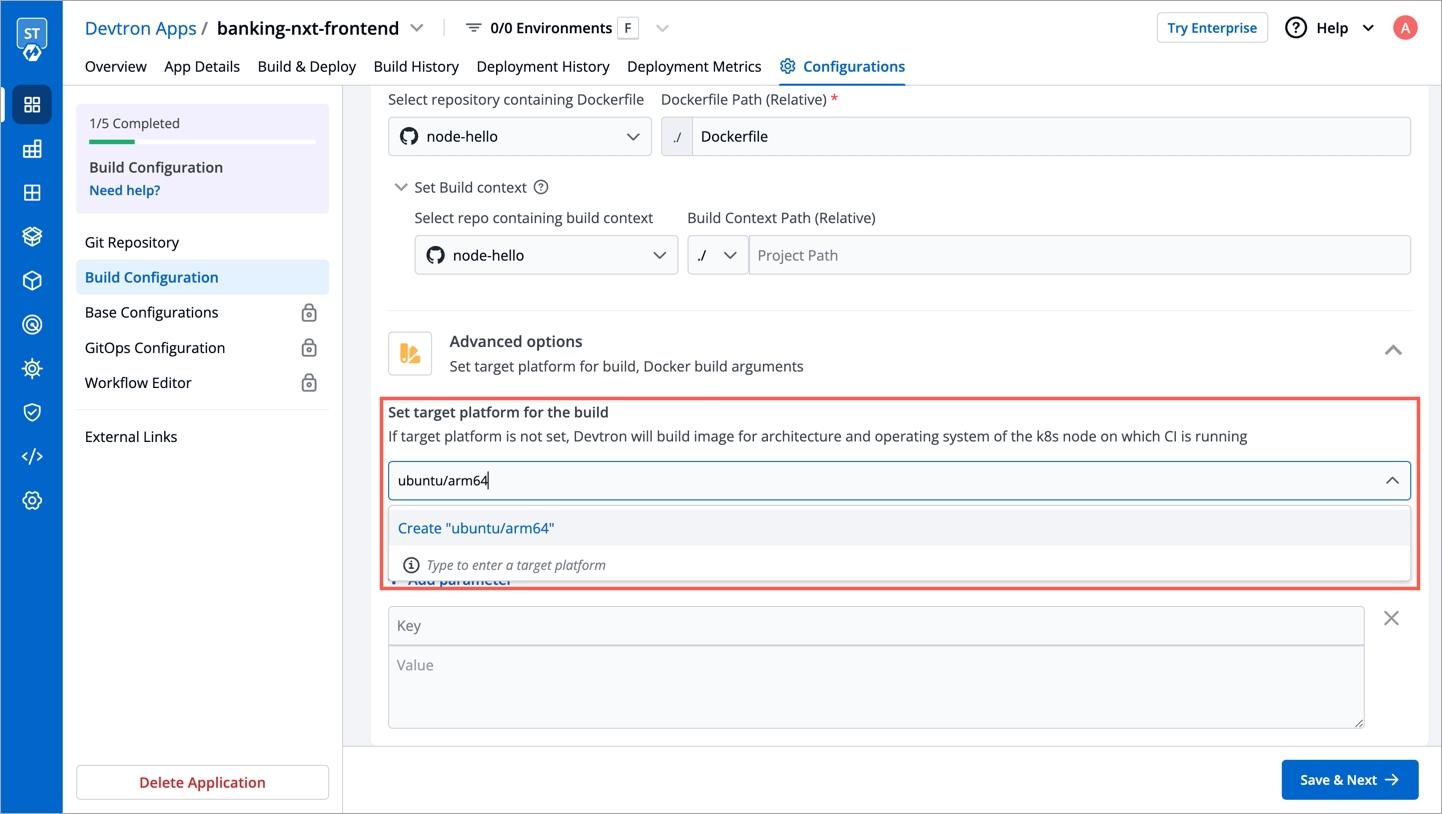

Using this option, users can build images for a specific or multiple architectures and operating systems (target platforms). They can select the target platform from the drop-down or can type to select a custom target platform.

Before selecting a custom target platform, please ensure that the architecture and the operating system is supported by the registry type you are using, otherwise builds will fail. Devtron uses BuildX to build images for mutiple target Platforms, which requires higher CI worker resources. To allocate more resources, you can increase value of the following parameters in the devtron-cm configmap in devtroncd namespace.

LIMIT_CI_CPU

REQ_CI_CPU

REQ_CI_MEM

LIMIT_CI_MEM

To edit the devtron-cm configmap in devtroncd namespace:

If target platform is not set, Devtron will build image for architecture and operating system of the k8s node on which CI is running.

The Target Platform feature might not work in minikube & microk8s clusters as of now.

Docker build arguments is a collapsed view including

Key

Value

This field will contain the key parameter and the value for the specified key for your docker build. This field is Optional. (If required, this can be overridden at CI step later)

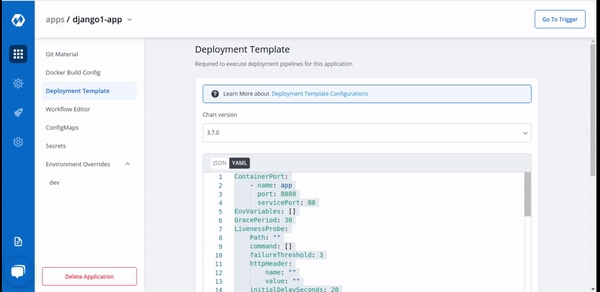

Deployment configuration is the Manifest for the application, it defines the runtime behavior of the application. You can define application behavior by providing information in three sections:

Chart Version

Yaml file

Show application metrics

Chart Version

Select the Chart Version using which you want to deploy the application.

Devtron uses helm charts for the deployments. And we are having multiple chart versions based on features it is supporting with every chart version.

One can see multiple chart version options available in the drop-down. you can select any chart version as per your requirements. By default, the latest version of the helm chart is selected in the chart version option.

Every chart version has its own YAML file. Helm charts are used to provide specifications for your application. To make it easy to use, we have created templates for the YAML file and have added some variables inside the YAML. You can provide or change the values of these variables as per your requirement.

If you want to see Application Metrics (For example Status codes 2xx, 3xx, 5xx; throughput, and latency) for your application, then you need to select the latest chart version.

Application Metrics is not supported for Chart version older than 3.7 version.

This defines ports on which application services will be exposed to other services

envoyPort

envoy port for the container.

envoyTimeout

envoy Timeout for the container,envoy supports a wide range of timeouts that may need to be configured depending on the deployment.By default the envoytimeout is 15s.

idleTimeout

the duration of time that a connection is idle before the connection is terminated.

name

name of the port.

port

port for the container.

servicePort

port of the corresponding kubernetes service.

supportStreaming

Used for high performance protocols like grpc where timeout needs to be disabled.

useHTTP2

Envoy container can accept HTTP2 requests.

EnvVariables provide run-time information to containers and allow to customize how the application works and the behavior of the applications on the system.

Here we can pass the list of env variables , every record is an object which contain the name of variable along with value.

To set environment variables for the containers that run in the Pod.

IMP Docker image should have env variables, whatever we want to set.

But ConfigMap and Secret are the prefered way to inject env variables. So we can create this in App Configuration Section

It is a centralized storage, specific to k8s namespace where key-value pairs are stored in plain text.

It is a centralized storage, specific to k8s namespace where we can store the key-value pairs in plain text as well as in encrypted(Base64) form.

IMP All key-values of Secret and CofigMap will reflect to your application.

If this check fails, kubernetes restarts the pod. This should return error code in case of non-recoverable error.

Path

It define the path where the liveness needs to be checked.

initialDelaySeconds

It defines the time to wait before a given container is checked for liveliness.

periodSeconds

It defines the time to check a given container for liveness.

successThreshold

It defines the number of successes required before a given container is said to fulfil the liveness probe.

timeoutSeconds

It defines the time for checking timeout.

failureThreshold

It defines the maximum number of failures that are acceptable before a given container is not considered as live.

command

The mentioned command is executed to perform the livenessProbe. If the command returns a non-zero value, it's equivalent to a failed probe.

httpHeaders

Custom headers to set in the request. HTTP allows repeated headers,You can override the default headers by defining .httpHeaders for the probe.

scheme

Scheme to use for connecting to the host (HTTP or HTTPS). Defaults to HTTP.

tcp

The kubelet will attempt to open a socket to your container on the specified port. If it can establish a connection, the container is considered healthy.

The maximum number of pods that can be unavailable during the update process. The value of "MaxUnavailable: " can be an absolute number or percentage of the replicas count. The default value of "MaxUnavailable: " is 25%.

The maximum number of pods that can be created over the desired number of pods. For "MaxSurge: " also, the value can be an absolute number or percentage of the replicas count. The default value of "MaxSurge: " is 25%.

This specifies the minimum number of seconds for which a newly created Pod should be ready without any of its containers crashing, for it to be considered available. This defaults to 0 (the Pod will be considered available as soon as it is ready).

If this check fails, kubernetes stops sending traffic to the application. This should return error code in case of errors which can be recovered from if traffic is stopped.

Path

It define the path where the readiness needs to be checked.

initialDelaySeconds

It defines the time to wait before a given container is checked for readiness.

periodSeconds

It defines the time to check a given container for readiness.

successThreshold

It defines the number of successes required before a given container is said to fulfill the readiness probe.

timeoutSeconds

It defines the time for checking timeout.

failureThreshold

It defines the maximum number of failures that are acceptable before a given container is not considered as ready.

command

The mentioned command is executed to perform the readinessProbe. If the command returns a non-zero value, it's equivalent to a failed probe.

httpHeaders

Custom headers to set in the request. HTTP allows repeated headers,You can override the default headers by defining .httpHeaders for the probe.

scheme

Scheme to use for connecting to the host (HTTP or HTTPS). Defaults to HTTP.

tcp

The kubelet will attempt to open a socket to your container on the specified port. If it can establish a connection, the container is considered healthy.

This is connected to HPA and controls scaling up and down in response to request load.

enabled

Set true to enable autoscaling else set false.

MinReplicas

Minimum number of replicas allowed for scaling.

MaxReplicas

Maximum number of replicas allowed for scaling.

TargetCPUUtilizationPercentage

The target CPU utilization that is expected for a container.

TargetMemoryUtilizationPercentage

The target memory utilization that is expected for a container.

extraMetrics

Used to give external metrics for autoscaling.

fullnameOverride replaces the release fullname created by default by devtron, which is used to construct Kubernetes object names. By default, devtron uses {app-name}-{environment-name} as release fullname.

Image is used to access images in kubernetes, pullpolicy is used to define the instances calling the image, here the image is pulled when the image is not present,it can also be set as "Always".

imagePullSecrets contains the docker credentials that are used for accessing a registry.

regcred is the secret that contains the docker credentials that are used for accessing a registry. Devtron will not create this secret automatically, you'll have to create this secret using dt-secrets helm chart in the App store or create one using kubectl. You can follow this documentation Pull an Image from a Private Registry https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/ .

This allows public access to the url, please ensure you are using right nginx annotation for nginx class, its default value is nginx

Legacy deployment-template ingress format

enabled

Enable or disable ingress

annotations

To configure some options depending on the Ingress controller

host

Host name

pathType

Path in an Ingress is required to have a corresponding path type. Supported path types are ImplementationSpecific, Exact and Prefix.

path

Path name

tls

It contains security details

This allows private access to the url, please ensure you are using right nginx annotation for nginx class, its default value is nginx

enabled

Enable or disable ingress

annotations

To configure some options depending on the Ingress controller

host

Host name

pathType

Path in an Ingress is required to have a corresponding path type. Supported path types are ImplementationSpecific, Exact and Prefix.

path

Path name

pathType

Supported path types are ImplementationSpecific, Exact and Prefix.

tls

It contains security details

Specialized containers that run before app containers in a Pod. Init containers can contain utilities or setup scripts not present in an app image. One can use base image inside initContainer by setting the reuseContainerImage flag to true.

To wait for given period of time before switch active the container.

These define minimum and maximum RAM and CPU available to the application.

Resources are required to set CPU and memory usage.

Limits make sure a container never goes above a certain value. The container is only allowed to go up to the limit, and then it is restricted.

Requests are what the container is guaranteed to get.

This defines annotations and the type of service, optionally can define name also.

type

Select the type of service, default ClusterIP

annotations

Annotations are widely used to attach metadata and configs in Kubernetes.

name

Optional field to assign name to service

loadBalancerSourceRanges

If service type is LoadBalancer, Provide a list of whitelisted IPs CIDR that will be allowed to use the Load Balancer.

Note - If loadBalancerSourceRanges is not set, Kubernetes allows traffic from 0.0.0.0/0 to the LoadBalancer / Node Security Group(s).

It is required when some values need to be read from or written to an external disk.

It is used to provide mounts to the volume.

Spec is used to define the desire state of the given container.

Node Affinity allows you to constrain which nodes your pod is eligible to schedule on, based on labels of the node.

Inter-pod affinity allow you to constrain which nodes your pod is eligible to be scheduled based on labels on pods.

Key part of the label for node selection, this should be same as that on node. Please confirm with devops team.

Value part of the label for node selection, this should be same as that on node. Please confirm with devops team.

Taints are the opposite, they allow a node to repel a set of pods.

A given pod can access the given node and avoid the given taint only if the given pod satisfies a given taint.

Taints and tolerations are a mechanism which work together that allows you to ensure that pods are not placed on inappropriate nodes. Taints are added to nodes, while tolerations are defined in the pod specification. When you taint a node, it will repel all the pods except those that have a toleration for that taint. A node can have one or many taints associated with it.

This is used to give arguments to command.

It contains the commands to run inside the container.

enabled

To enable or disable the command.

value

It contains the commands.

workingDir

It is used to specify the working directory where commands will be executed.

Containers section can be used to run side-car containers along with your main container within same pod. Containers running within same pod can share volumes and IP Address and can address each other @localhost.

It is a kubernetes monitoring tool and the name of the file to be monitored as monitoring in the given case.It describes the state of the prometheus.

Accepts an array of Kubernetes objects. You can specify any kubernetes yaml here and it will be applied when your app gets deployed.

Kubernetes waits for the specified time called the termination grace period before terminating the pods. By default, this is 30 seconds. If your pod usually takes longer than 30 seconds to shut down gracefully, make sure you increase the GracePeriod.

A Graceful termination in practice means that your application needs to handle the SIGTERM message and begin shutting down when it receives it. This means saving all data that needs to be saved, closing down network connections, finishing any work that is left, and other similar tasks.

There are many reasons why Kubernetes might terminate a perfectly healthy container. If you update your deployment with a rolling update, Kubernetes slowly terminates old pods while spinning up new ones. If you drain a node, Kubernetes terminates all pods on that node. If a node runs out of resources, Kubernetes terminates pods to free those resources. It’s important that your application handle termination gracefully so that there is minimal impact on the end user and the time-to-recovery is as fast as possible.

It is used for providing server configurations.

It gives the details for deployment.

image_tag

It is the image tag

image

It is the URL of the image

It gives the set of targets to be monitored.

It is used to configure database migration.

Application metrics can be enabled to see your application's metrics-CPUService Monito usage,Memory Usage,Status,Throughput and Latency.

It gives the realtime metrics of the deployed applications

Deployment Frequency

It shows how often this app is deployed to production

Change Failure Rate

It shows how often the respective pipeline fails.

Mean Lead Time

It shows the average time taken to deliver a change to production.

Mean Time to Recovery

It shows the average time taken to fix a failed pipeline.

A service account provides an identity for the processes that run in a Pod.

When you access the cluster, you are authenticated by the API server as a particular User Account. Processes in containers inside pod can also contact the API server. When you are authenticated as a particular Service Account.

When you create a pod, if you do not create a service account, it is automatically assigned the default service account in the namespace.

You can create PodDisruptionBudget for each application. A PDB limits the number of pods of a replicated application that are down simultaneously from voluntary disruptions. For example, an application would like to ensure the number of replicas running is never brought below the certain number.

You can specify maxUnavailable and minAvailable in a PodDisruptionBudget.

With minAvailable of 1, evictions are allowed as long as they leave behind 1 or more healthy pods of the total number of desired replicas.

With maxAvailable of 1, evictions are allowed as long as at most 1 unhealthy replica among the total number of desired replicas.

Envoy is attached as a sidecar to the application container to collect metrics like 4XX, 5XX, Throughput and latency. You can now configure the envoy settings such as idleTimeout, resources etc.

Alerting rules allow you to define alert conditions based on Prometheus expressions and to send notifications about firing alerts to an external service.

In this case, Prometheus will check that the alert continues to be active during each evaluation for 1 minute before firing the alert. Elements that are active, but not firing yet, are in the pending state.

Labels are key/value pairs that are attached to pods. Labels are intended to be used to specify identifying attributes of objects that are meaningful and relevant to users, but do not directly imply semantics to the core system. Labels can be used to organize and to select subsets of objects.

Pod Annotations are widely used to attach metadata and configs in Kubernetes.

HPA, by default is configured to work with CPU and Memory metrics. These metrics are useful for internal cluster sizing, but you might want to configure wider set of metrics like service latency, I/O load etc. The custom metrics in HPA can help you to achieve this.

Wait for given period of time before scaling down the container.

If you want to see application metrics like different HTTP status codes metrics, application throughput, latency, response time. Enable the Application metrics from below the deployment template Save button. After enabling it, you should be able to see all metrics on App detail page. By default it remains disabled.

Once all the Deployment template configurations are done, click on Save to save your deployment configuration. Now you are ready to create Workflow to do CI/CD.

Helm Chart json schema is used to validate the deployment template values.

reference-chart_3-12-0

reference-chart_3-11-0

reference-chart_3-10-0

reference-chart_3-9-0

The values of CPU and Memory in limits must be greater than or equal to in requests respectively. Similarly, In case of envoyproxy, the values of limits are greater than or equal to requests as mentioned below.

KEDA is a Kubernetes-based Event Driven Autoscaler. With KEDA, you can drive the scaling of any container in Kubernetes based on the number of events needing to be processed. KEDA can be installed into any Kubernetes cluster and can work alongside standard Kubernetes components like the Horizontal Pod Autoscaler(HPA).

Example for autosccaling with KEDA using Prometheus metrics is given below:

Example for autosccaling with KEDA based on kafka is given below :

A security context defines privilege and access control settings for a Pod or Container.

To add a security context for main container:

To add a security context on pod level:

You can use topology spread constraints to control how Pods are spread across your cluster among failure-domains such as regions, zones, nodes, and other user-defined topology domains. This can help to achieve high availability as well as efficient resource utilization.

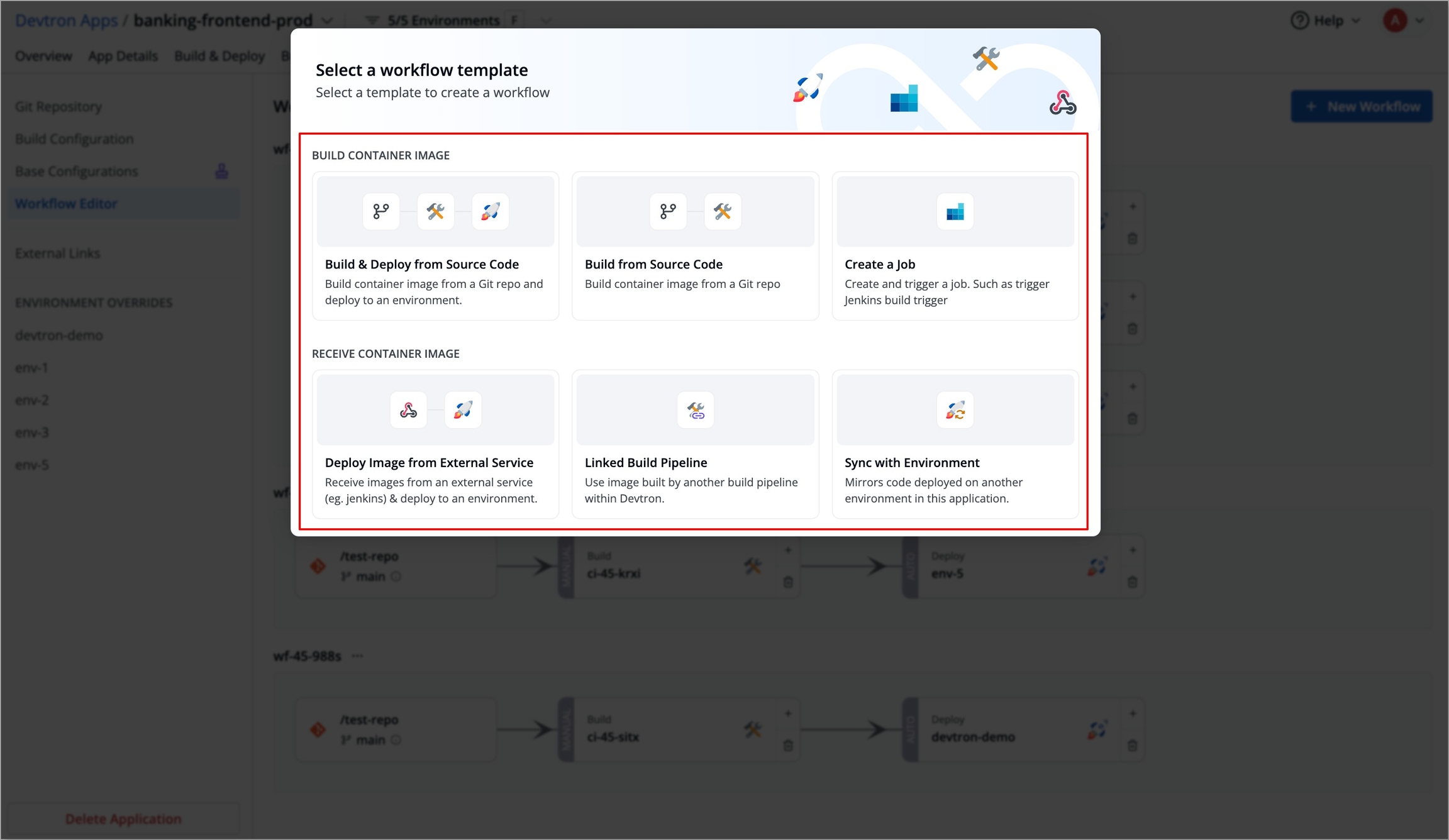

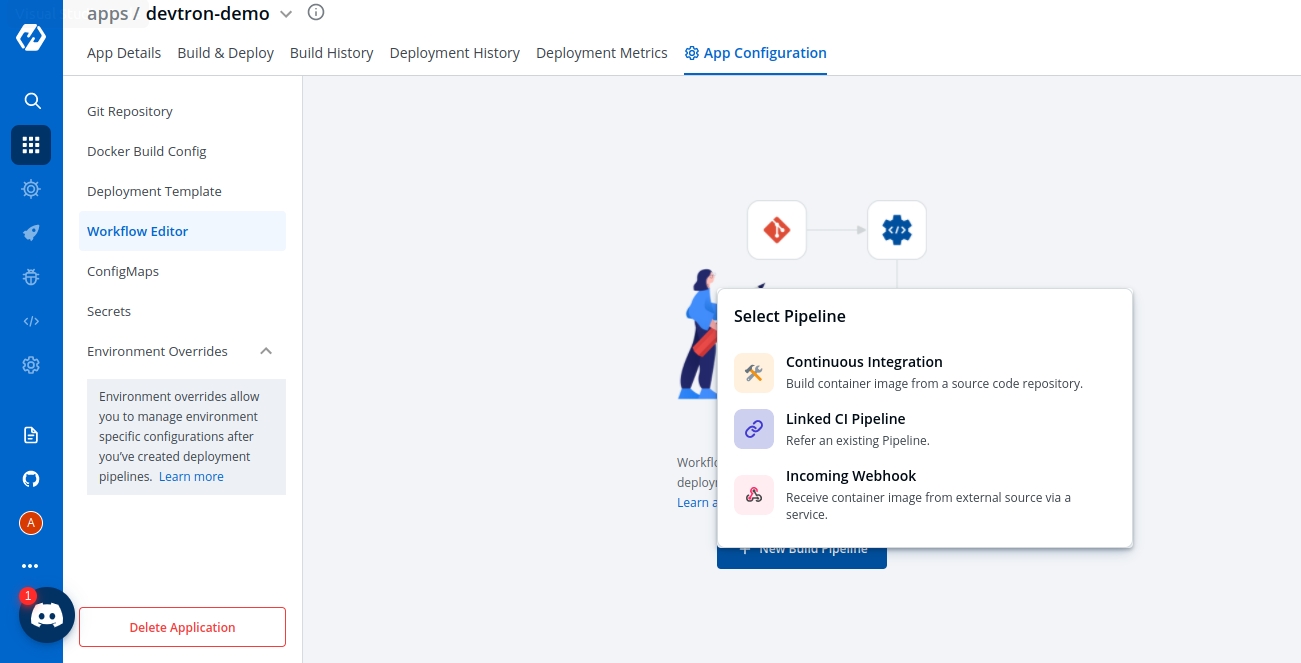

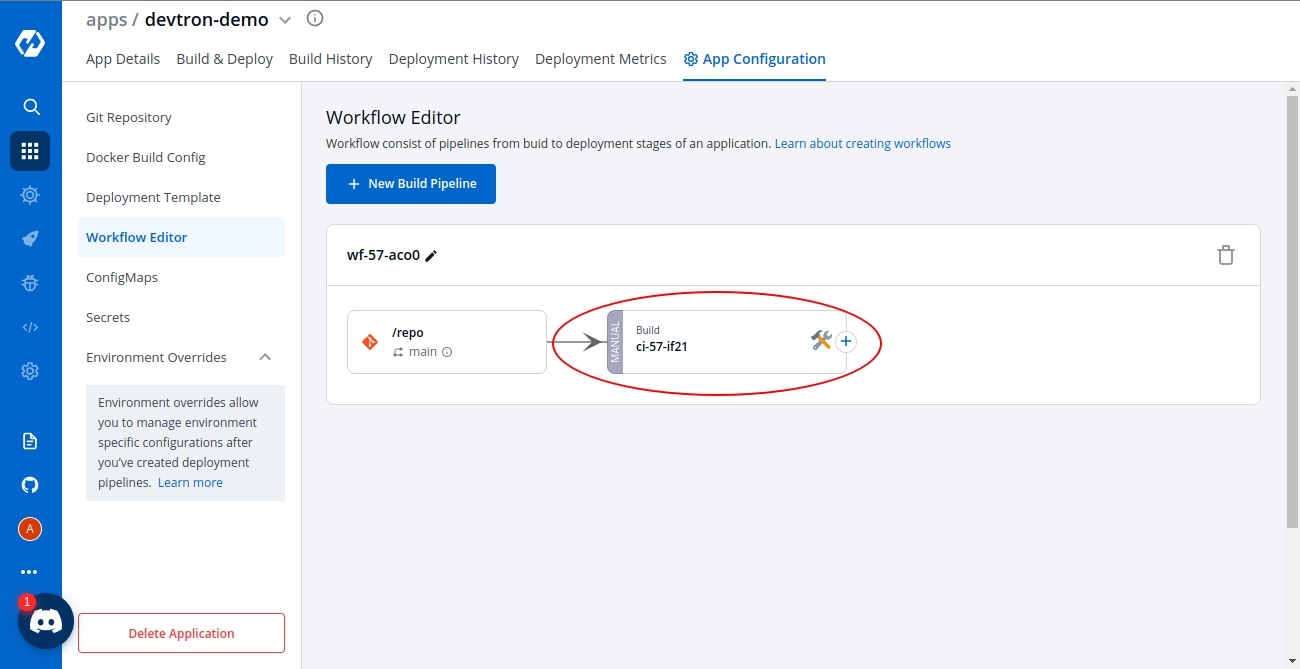

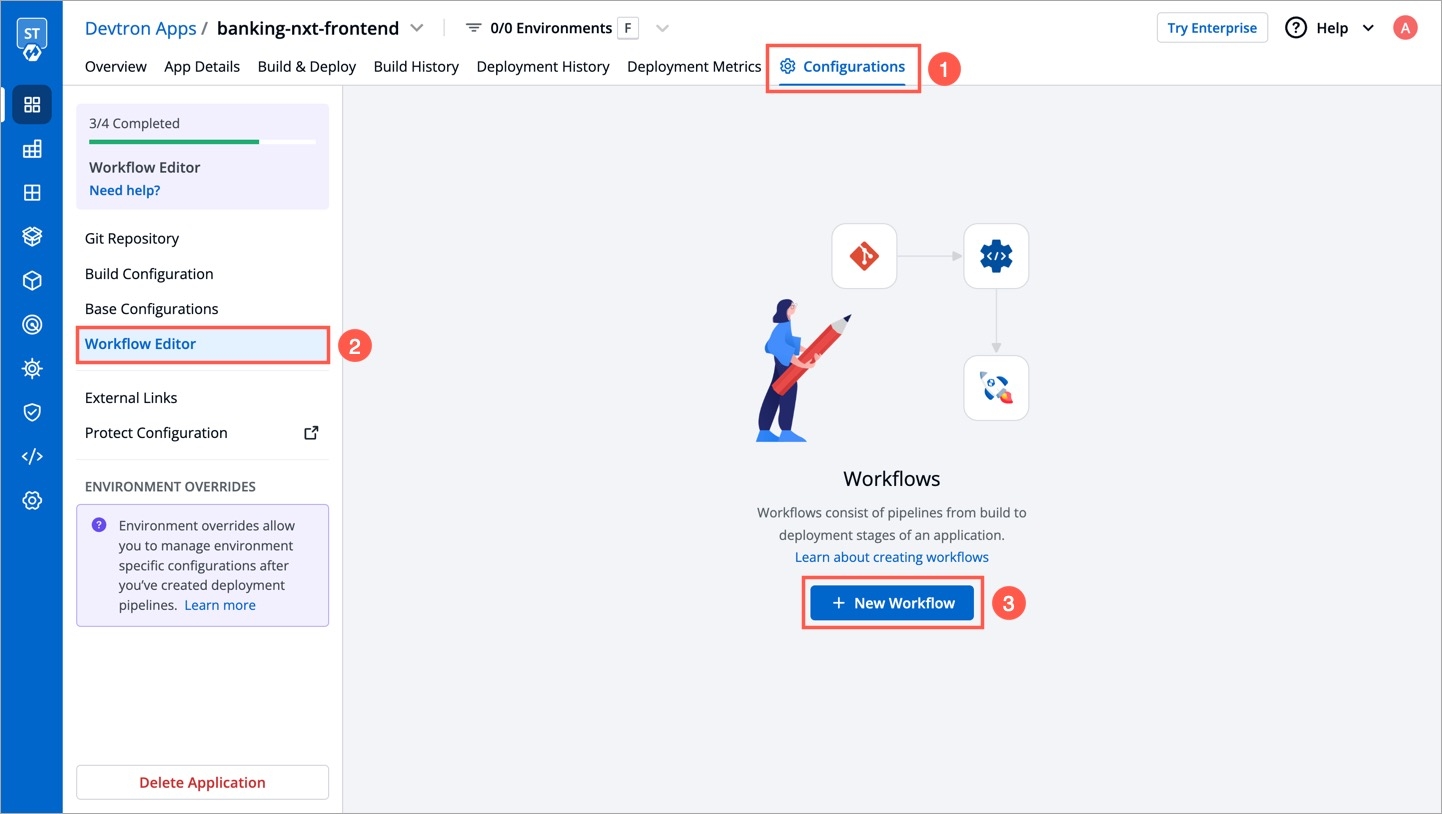

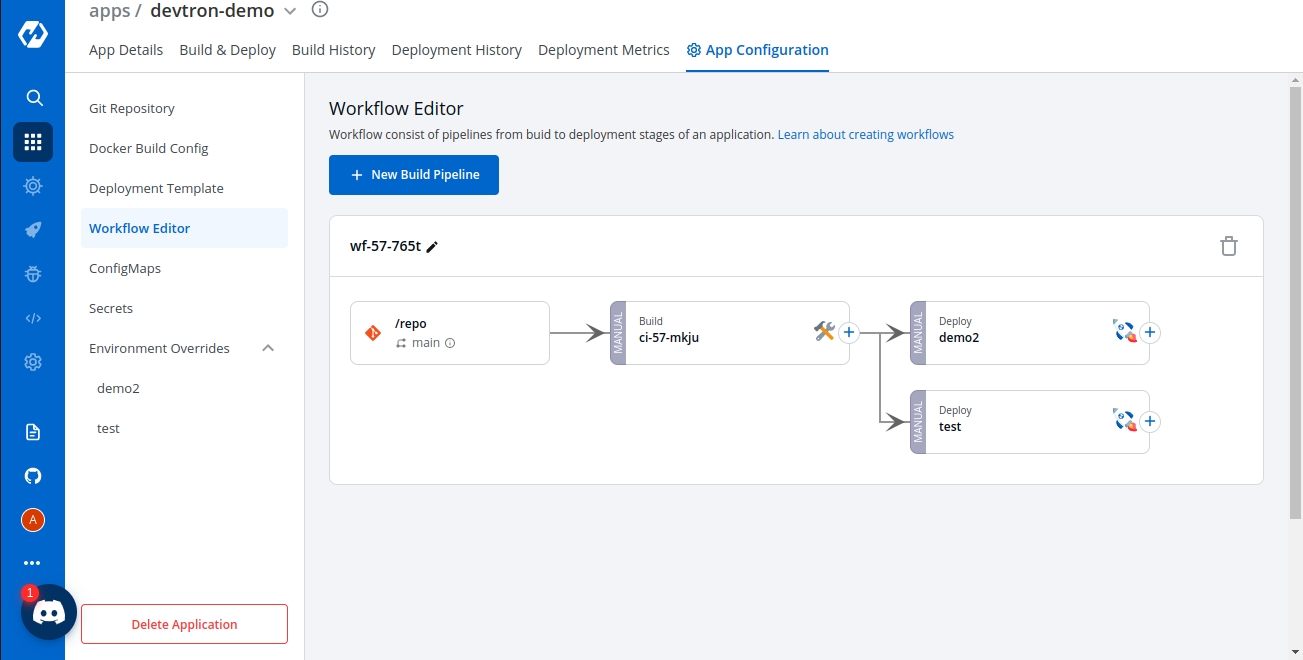

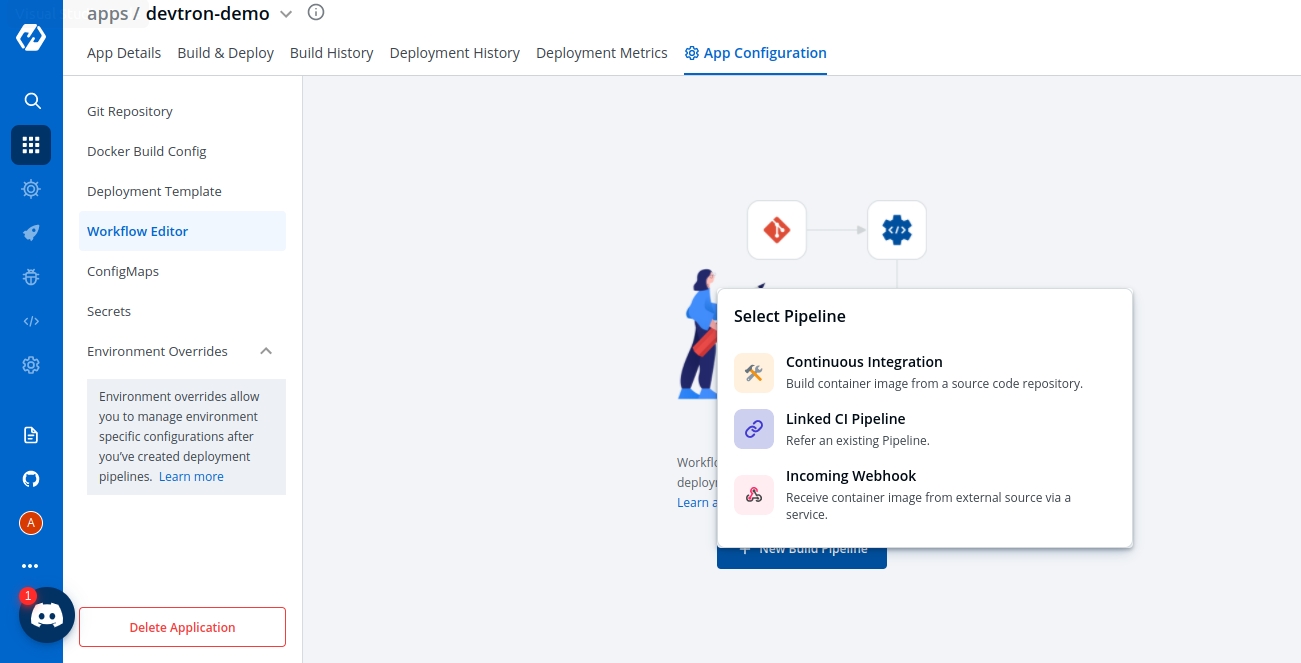

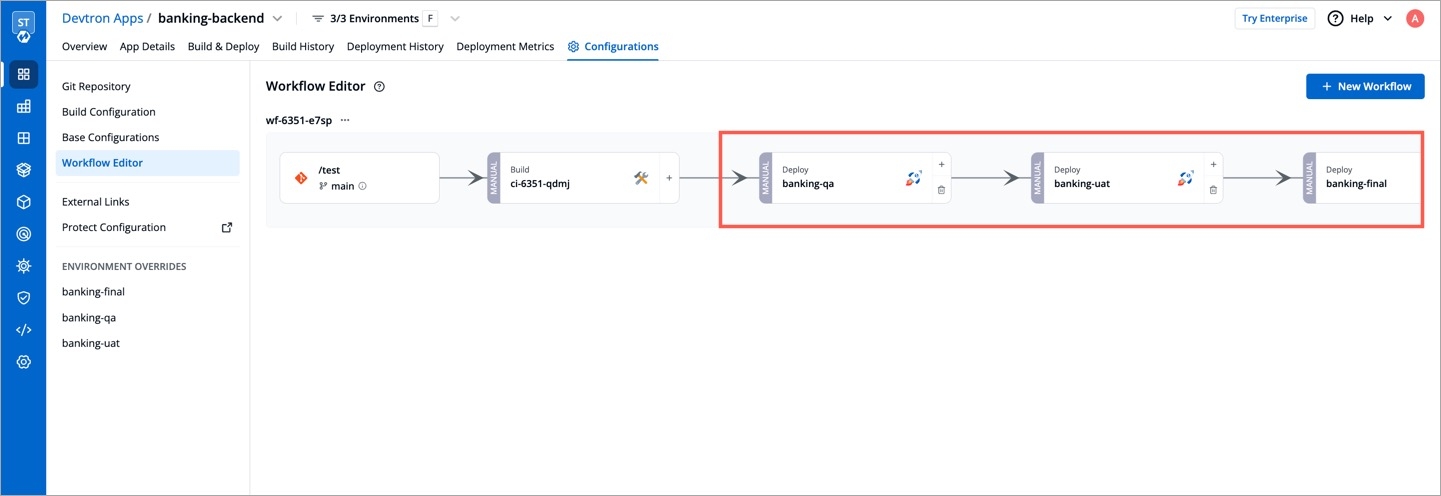

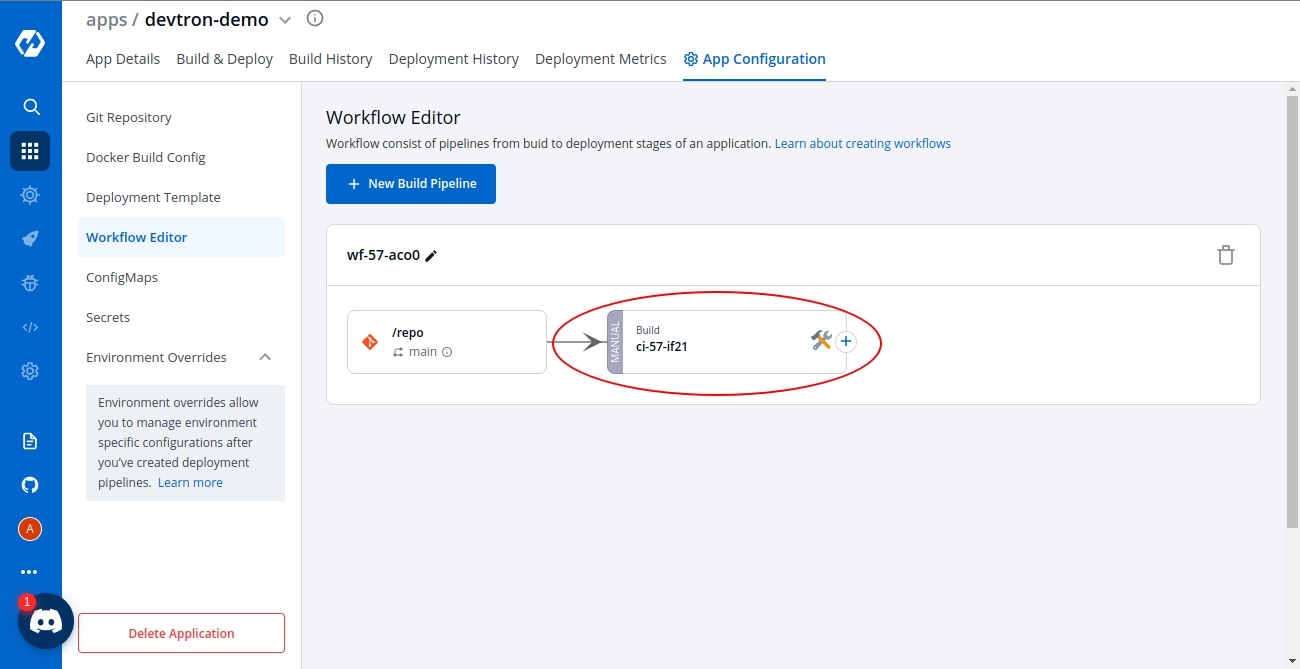

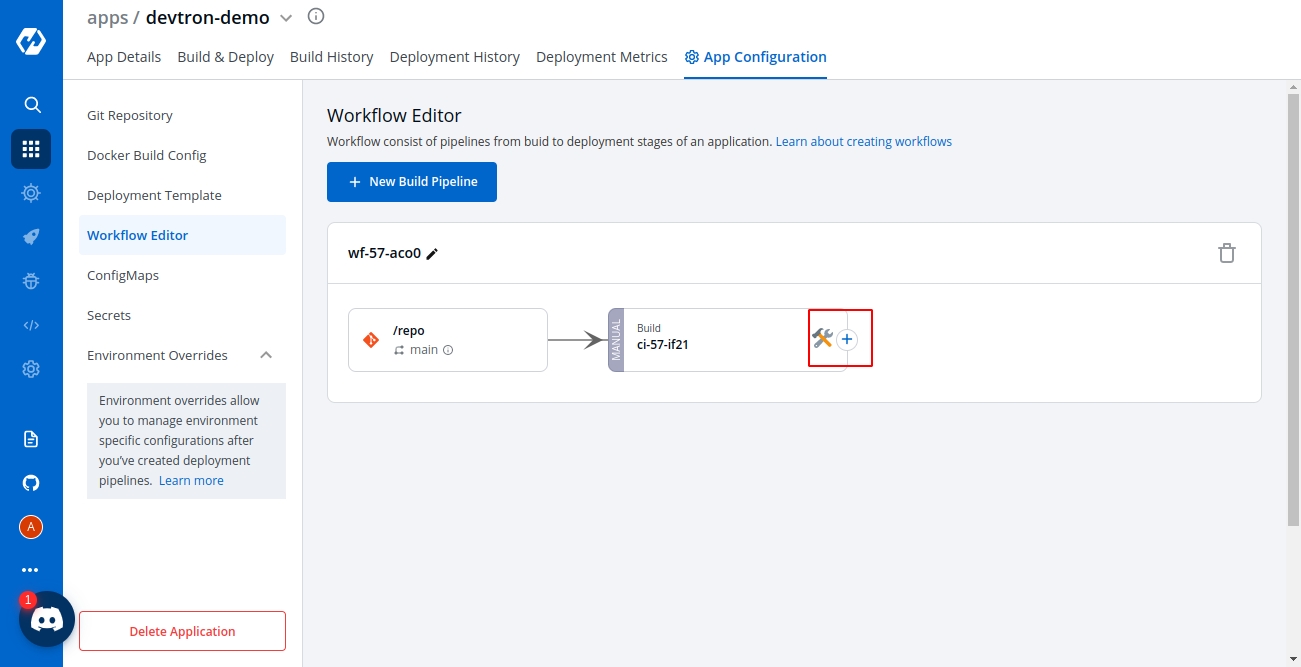

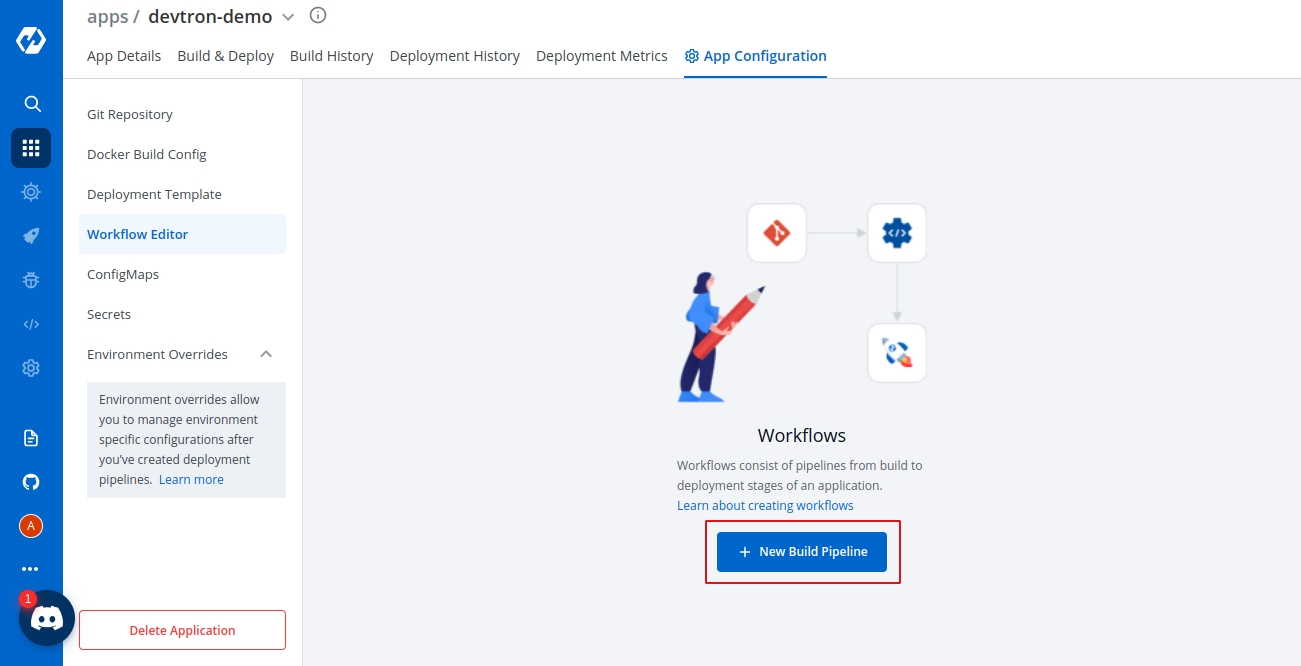

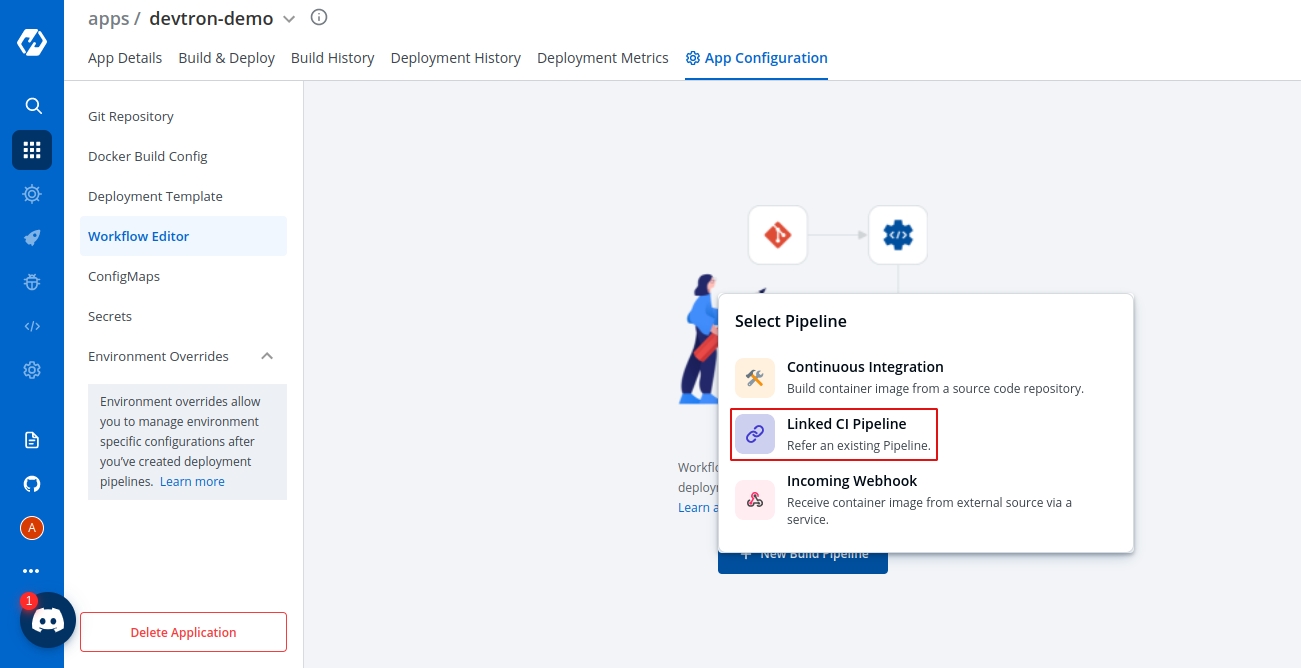

Workflow is a logical sequence of different stages used for continuous integration and continuous deployment of an application.

Click on New Build Pipeline to create a new workflow

On clicking New Build Pipeline, three options appear as mentioned below:

Continuous Integration: Choose this option if you want Devtron to build the image of source code.

Linked CI Pipeline: Choose this option if you want to use an image created by an existing CI pipeline in Devtron.

Incoming Webhook: Choose this if you want to build your image outside Devtron, it will receive a docker image from an external source via the incoming webhook.

Then, create CI/CD Pipelines for your application.

To know how to create the CI pipeline for your application, click on: Create CI Pipelines

To know how to create the CD pipeline for your application, click on: Create CD Pipelines

Info:

For Devtron version older than v0.4.0, please refer the page.

A CI Pipeline can be created in one of the three ways:

Each of these methods has different use-cases that can be tailored to the needs of the organization.

Continuous Integration Pipeline allows you to build the container image from a source code repository.

From the Applications menu, select your application.

On the App Configuration page, select Workflow Editor.

Select + New Build Pipeline.

Select Continuous Integration.

Enter the following fields on the Create build pipeline screen:

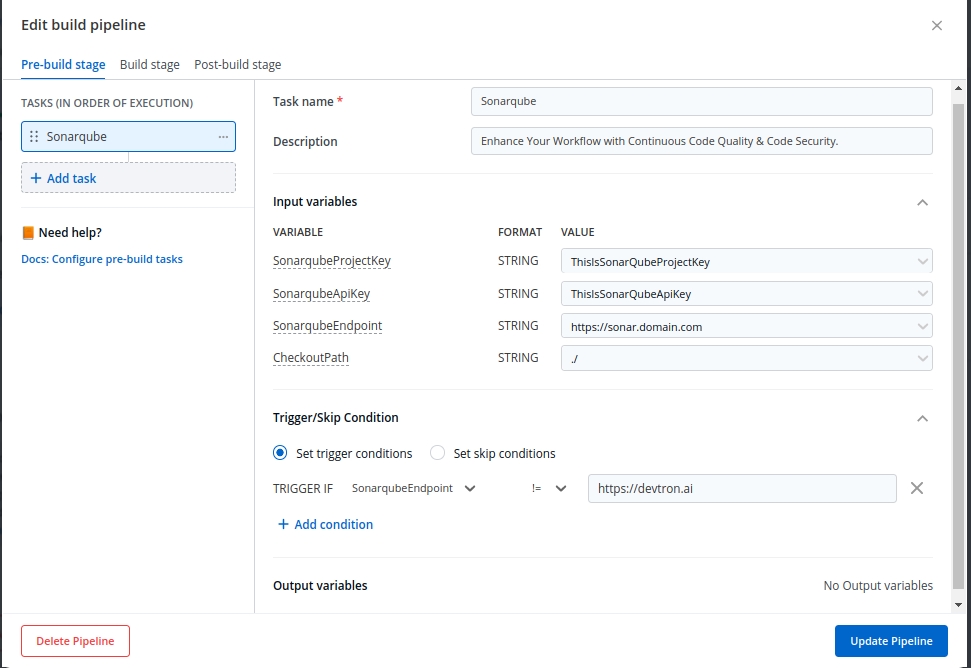

The advanced CI Pipeline includes the following stages:

Pre-build stage: The tasks in this stage run before the image is built.

Build stage: In this stage, the build is triggered from the source code that you provide.

Post-build stage: The tasks in this stage are triggered once the build is complete.

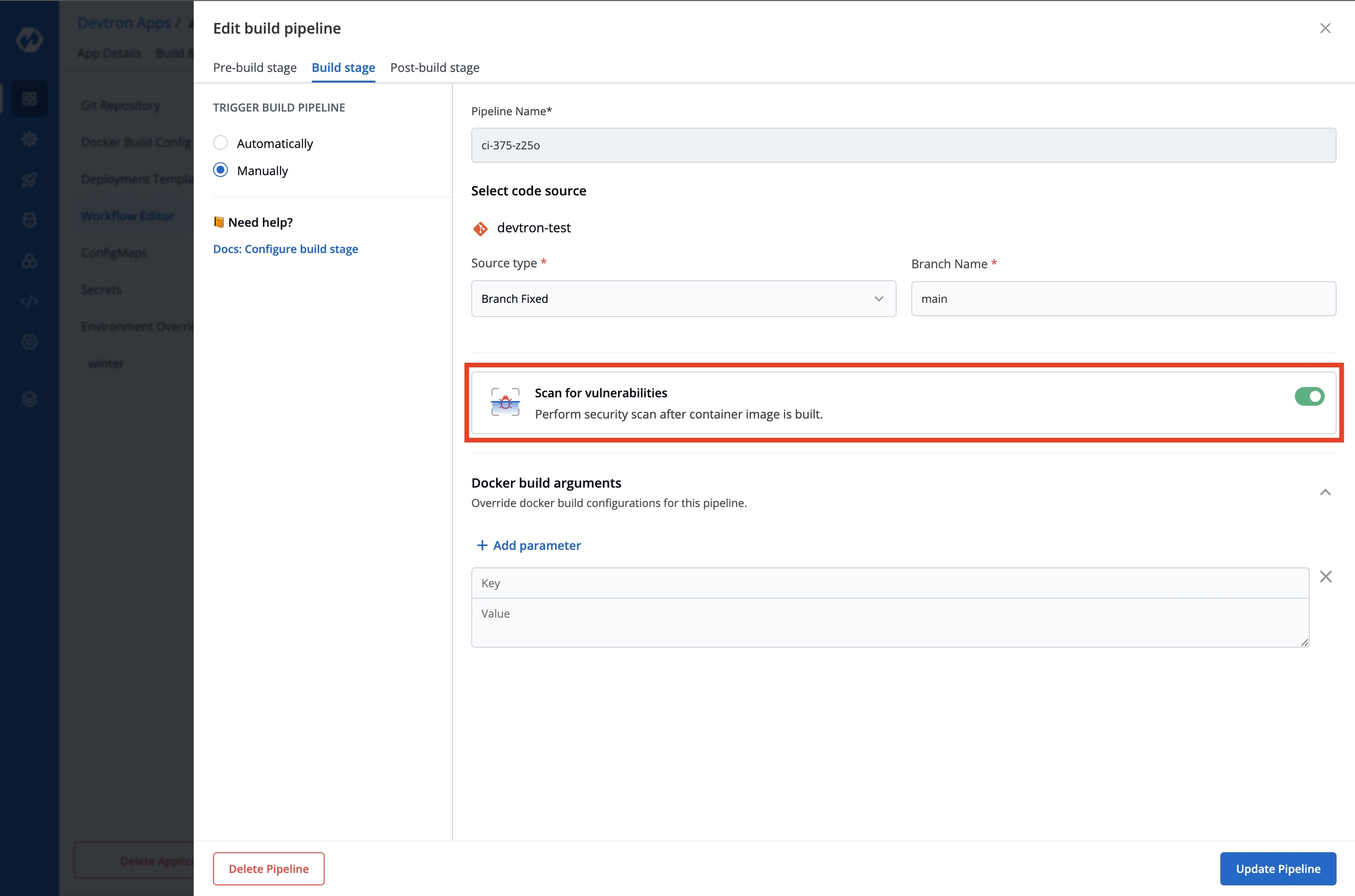

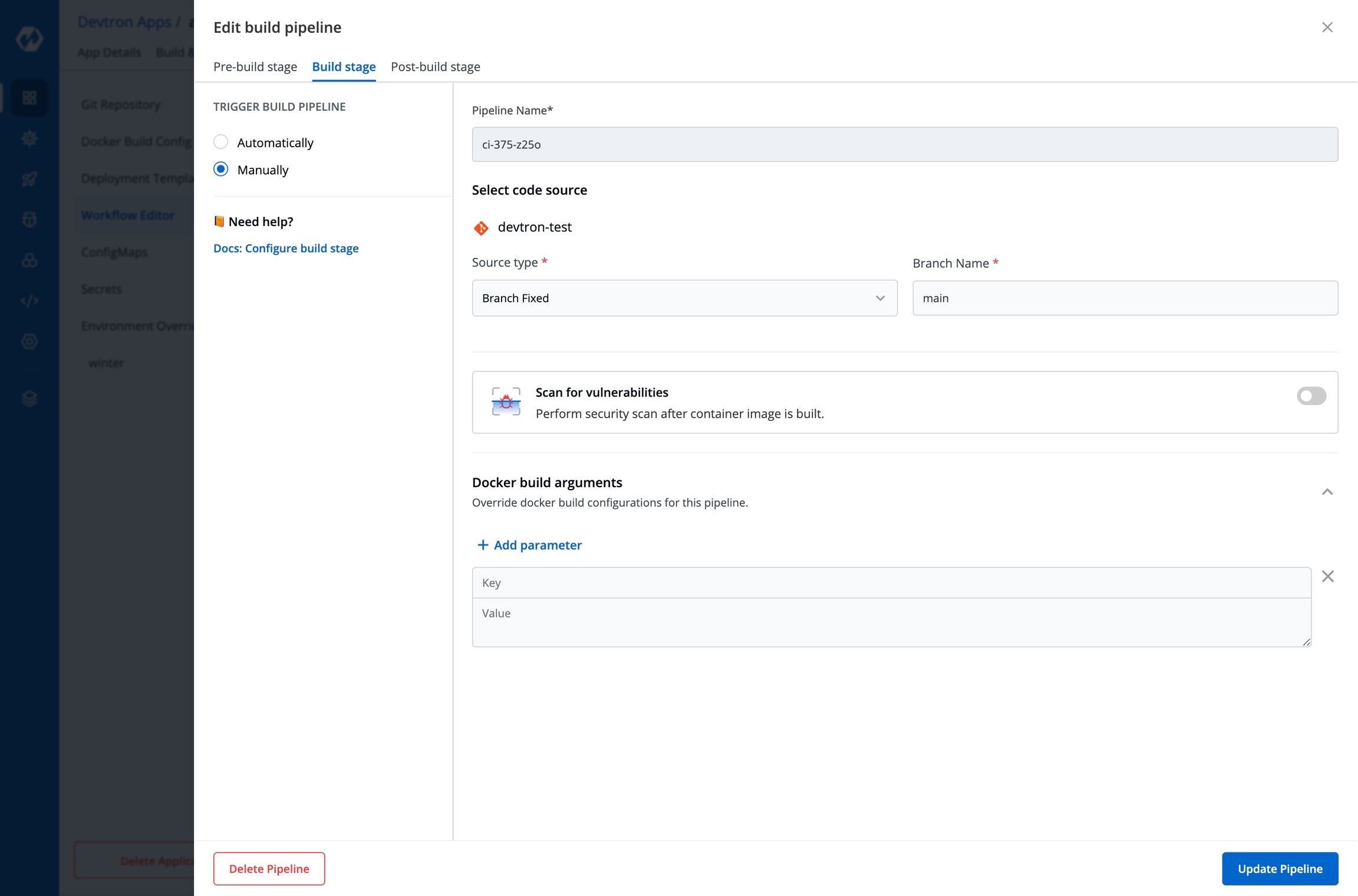

To Perform the security scan after the container image is built, enable the Scan for vulnerabilities toggle in the build stage.

The Build stage allows you to configure a build pipeline from the source code.

From the Create build pipeline screen, select Advanced Options.

Select Build stage.

Select Update Pipeline.

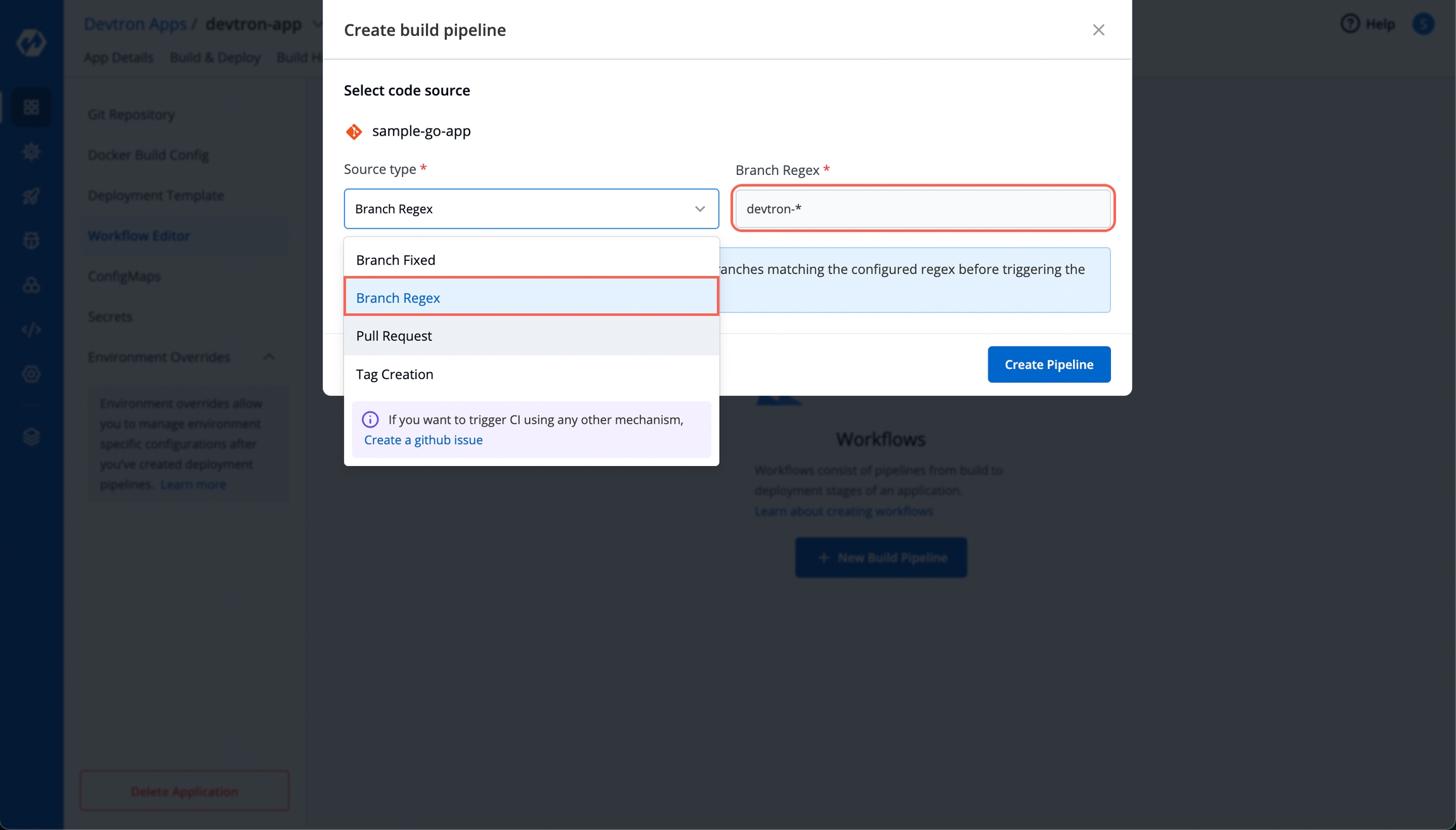

The Source type - "Branch Fixed" allows you to trigger a CI build whenever there is a code change on the specified branch.

Select the Source type as "Branch Fixed" and enter the Branch Name.

Branch Regex allows users to easily switch between branches matching the configured Regex before triggering the build pipeline. In case of Branch Fixed, users cannot change the branch name in ci-pipeline unless they have admin access for the app. So, if users with Build and Deploy access should be allowed to switch branch name before triggering ci-pipeline, Branch Regex should be selected as source type by a user with Admin access.

For example if the user sets the Branch Regex as feature-*, then users can trigger from branches such as feature-1450, feature-hot-fix etc.

Info: If you choose "Pull Request" or "Tag Creation" as the source type, you must first configure the Webhook for GitHub/Bitbucket as a prerequisite step.

Go to the Settings page of your repository and select Webhooks.

Select Add webhook.

In the Payload URL field, enter the Webhook URL that you get on selecting the source type as "Pull Request" or "Tag Creation" in Devtron the dashboard.

Change the Content-type to application/json.

In the Secret field, enter the secret from Devtron the dashboard when you select the source type as "Pull Request" or "Tag Creation".

Under Which events would you like to trigger this webhook?, select Let me select individual events. to trigger the webhook to build CI Pipeline.

Select Branch or tag creation and Pull Requests.

Select Add webhook.

Go to the Repository settings page of your Bitbucket repository.

Select Webhooks and then select Add webhook.

Enter a Title for the webhook.

In the URL field, enter the Webhook URL that you get on selecting the source type as "Pull Request" or "Tag Creation" in the Devtron dashboard.

Select the event triggers for which you want to trigger the webhook.

Select Save to save your configurations.

The Source type - "Pull Request" allows you to configure the CI Pipeline using the PR raised in your repository.

To trigger the build from specific PRs, you can filter the PRs based on the following keys:

Select the appropriate filter and pass the matching condition as a regular expression (regex).

Select Create Pipeline.

The Source type - "Tag Creation" allows you to build the CI pipeline from a tag.

To trigger the build from specific tags, you can filter the tags based on the author and/or the tag name.

Select the appropriate filter and pass the matching condition as a regular expression (regex).

Select Create Pipeline.

Note

(a) You can provide pre-build and post-build stages via the Devtron tool’s console or can also provide these details by creating a file

devtron-ci.yamlinside your repository. There is a pre-defined format to write this file. And we will run these stages using this YAML file. You can also provide some stages on the Devtron tool’s console and some stages in the devtron-ci.yaml file. But stages defined through theDevtrondashboard are first executed then the stages defined in thedevtron-ci.yamlfile.(b) The total timeout for the execution of the CI pipeline is by default set as 3600 seconds. This default timeout is configurable according to the use case. The timeout can be edited in the configmap of the orchestrator service in the env variable as

env:"DEFAULT_TIMEOUT" envDefault:"3600"

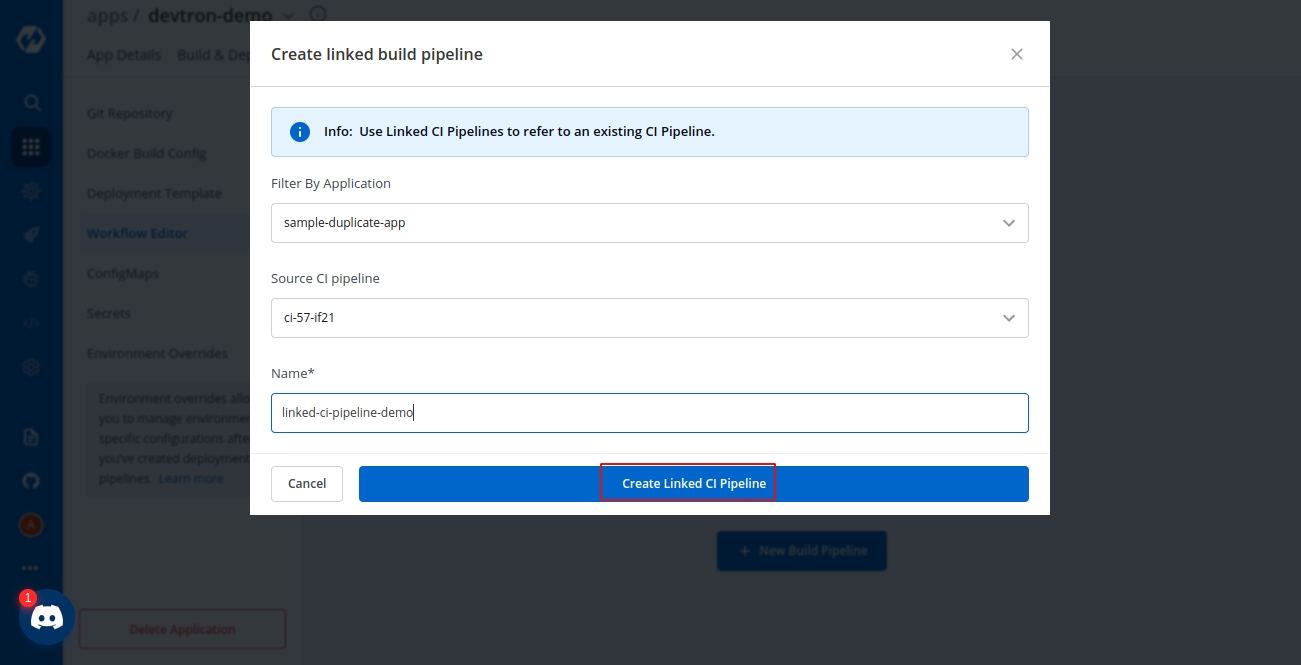

If one code is shared across multiple applications, Linked CI Pipeline can be used, and only one image will be built for multiple applications because if there is only one build, it is not advisable to create multiple CI Pipelines.

From the Applications menu, select your application.

On the App Configuration page, select Workflow Editor.

Select + New Build Pipeline.

Select Linked CI Pipeline.

Enter the following fields on the Create linked build pipeline screen:

Select the application in which the source CI pipeline is present.

Select the source CI pipeline from the application that you selected above.

Enter a name for the linked CI pipeline.

Select Create Linked CI Pipeline.

After creating a linked CI pipeline, you can create a CD pipeline. Builds cannot be triggered from a linked CI pipeline; they can only be triggered from the source CI pipeline. There will be no images to deploy in the CD pipeline created from the 'linked CI pipeline' at first. To see the images in the CD pipeline of the linked CI pipeline, trigger build in the source CI pipeline. The build images will now be listed in the CD pipeline of the 'linked CI pipeline' whenever you trigger a build in the source CI pipeline.

The CI pipeline receives container images from an external source via a webhook service.

You can use Devtron for deployments on Kubernetes while using your CI tool such as Jenkins. External CI features can be used when the CI tool is hosted outside the Devtron platform.

From the Applications menu, select your application.

On the App Configuration page, select Workflow Editor.

Select + New Build Pipeline.

Select Incoming Webhook.

Select Save and Generate URL. This generates the Payload format and Webhook URL.

You can send the Payload script to your CI tools such as Jenkins and Devtron will receive the build image every time the CI Service is triggered or you can use the Webhook URL which will build an image every time CI Service is triggered using Devtron Dashboard.

You can update the configurations of an existing CI Pipeline except for the pipeline's name. To update a pipeline, select your CI pipeline. In the Edit build pipeline window, edit the required stages and select Update Pipeline.

You can only delete a CI pipeline if there is no CD pipeline created in your workflow.

To delete a CI pipeline, go to App Configurations > Workflow Editor and select Delete Pipeline.

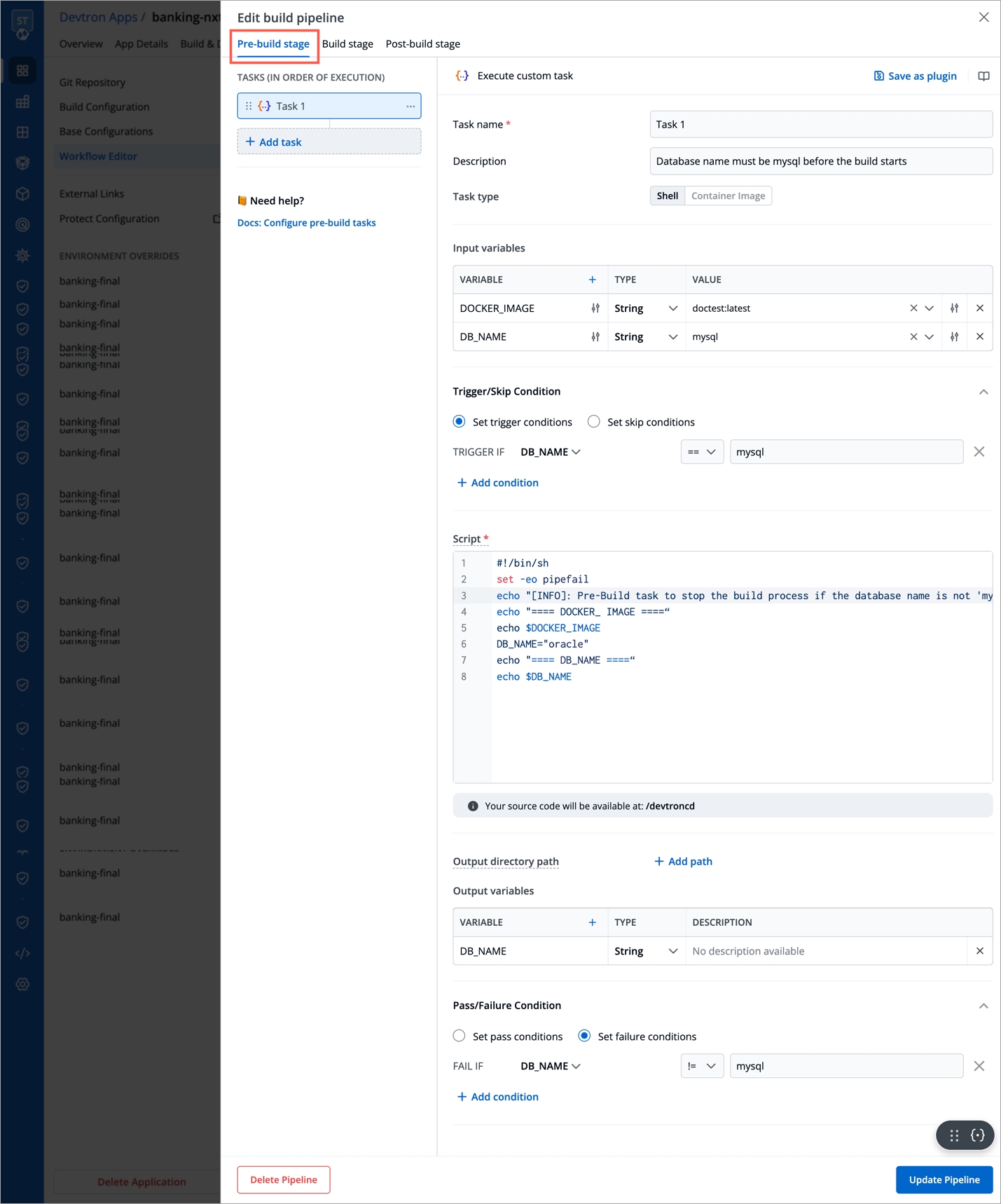

The CI pipeline includes Pre and Post-build steps to validate and introduce checkpoints in the build process.

The pre/post plugins allow you to execute some standard tasks, such as Code analysis, Load testing, Security scanning, and so on. You can build custom pre/post tasks or use one from the standard preset plugins provided by Devtron.

Create a if you haven't done that already!

Each Pre/Post-build stage is executed as a series of events called tasks and includes custom scripts. You could create one or more tasks that are dependent on one another for execution. In other words, the output variable of one task can be used as an input for the next task to build a CI runner.

The tasks will run following the execution order.

The tasks may be re-arranged by using drag-and-drop; however, the order of passing the variables must be followed.

Go to Applications and select your application from the Devtron Apps tabs.

From the App Configuration tab select Workflow Editor.

Select the build pipeline for editing the stages.

Devtron CI pipeline includes the following build stages:

Pre-build stage: The tasks in this stage run before the image is built.

Build stage: In this stage, the build is triggered from the source code that you provide.

Post-build stage: The tasks in this stage are triggered once the build is complete.

You can create a task either by selecting one of the available preset plugins or by creating a custom script.

Prerequisite: Set up Sonarqube, or get the API keys from an admin.

The example shows a Post-build stage with a task created using a preset plugin - Sonarqube.

On the Edit build pipeline screen, select the Post-build stage (or Pre-build).

Select + Add task.

Select Sonarqube from PRESET PLUGINS.

Select Update Pipeline.

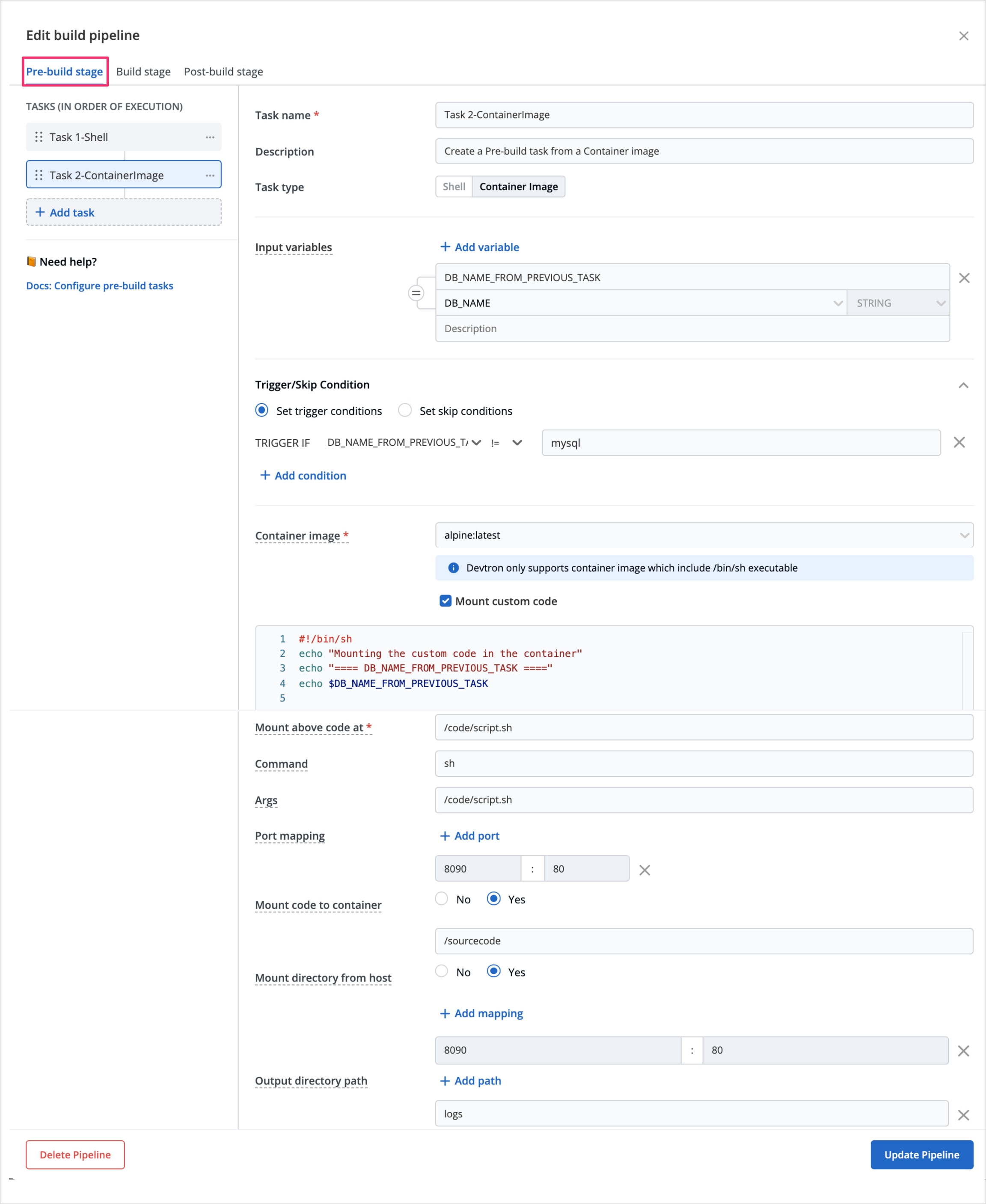

On the Edit build pipeline screen, select the Pre-build stage.

Select + Add task.

Select Execute custom script.

Select the Task type as Shell.

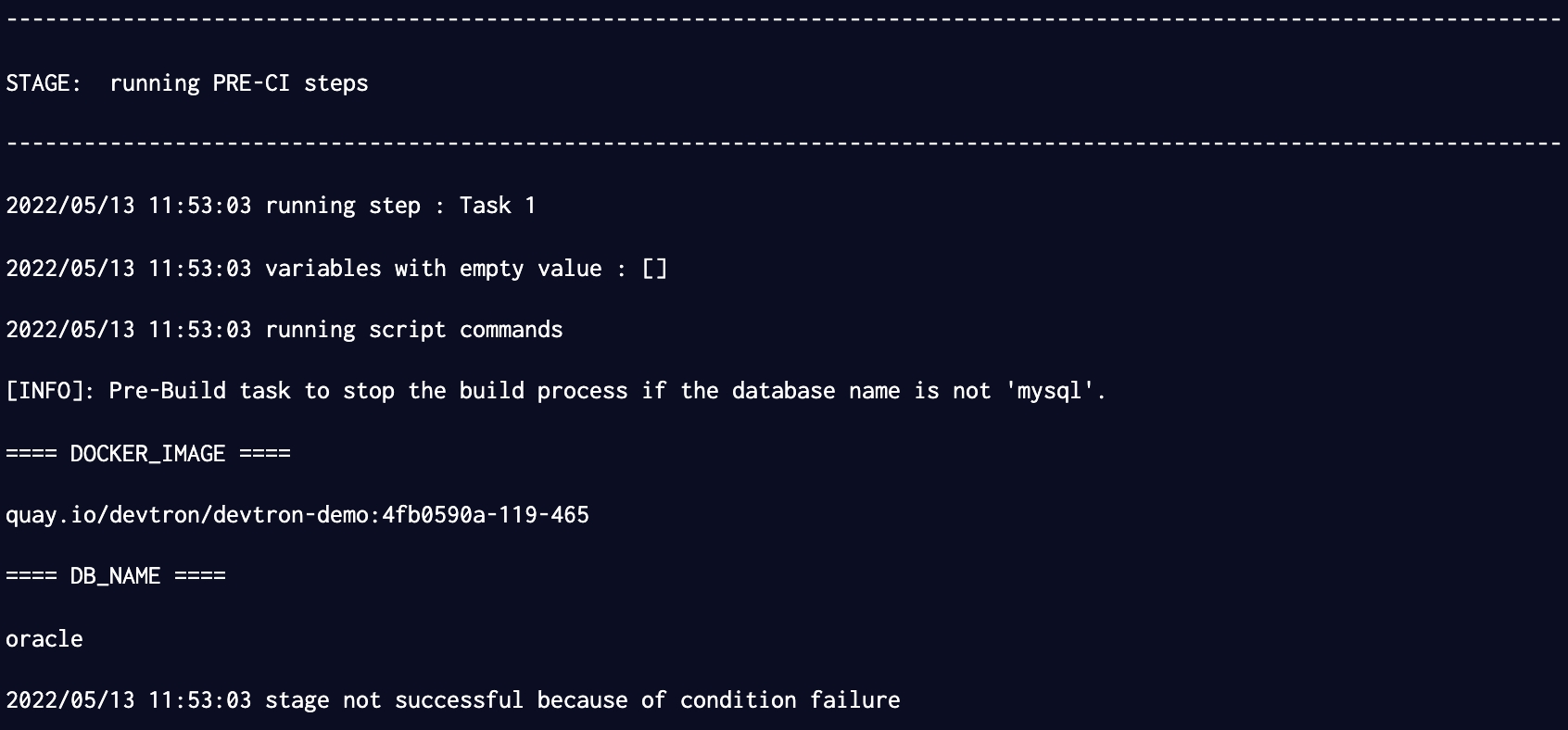

Consider an example that creates a Shell task to stop the build if the database name is not "mysql". The script takes 2 input variables, one is a global variable (DOCKER_IAMGE), and the other is a custom variable (DB_NAME) with a value "mysql". The task triggers only if the database name matches "mysql". If the trigger condition fails, this Pre-build task will be skipped and the build process will start. The variable DB_NAME is declared as an output variable that will be available as an input variable for the next task. The task fails if DB_NAME is not equal to "mysql".

Select Update Pipeline.

Here is a screenshot with the failure message from the task:

Select the Task type as Container image.

This example creates a Pre-build task from a container image. The output variable from the previous task is available as an input variable.

Select Update Pipeline.

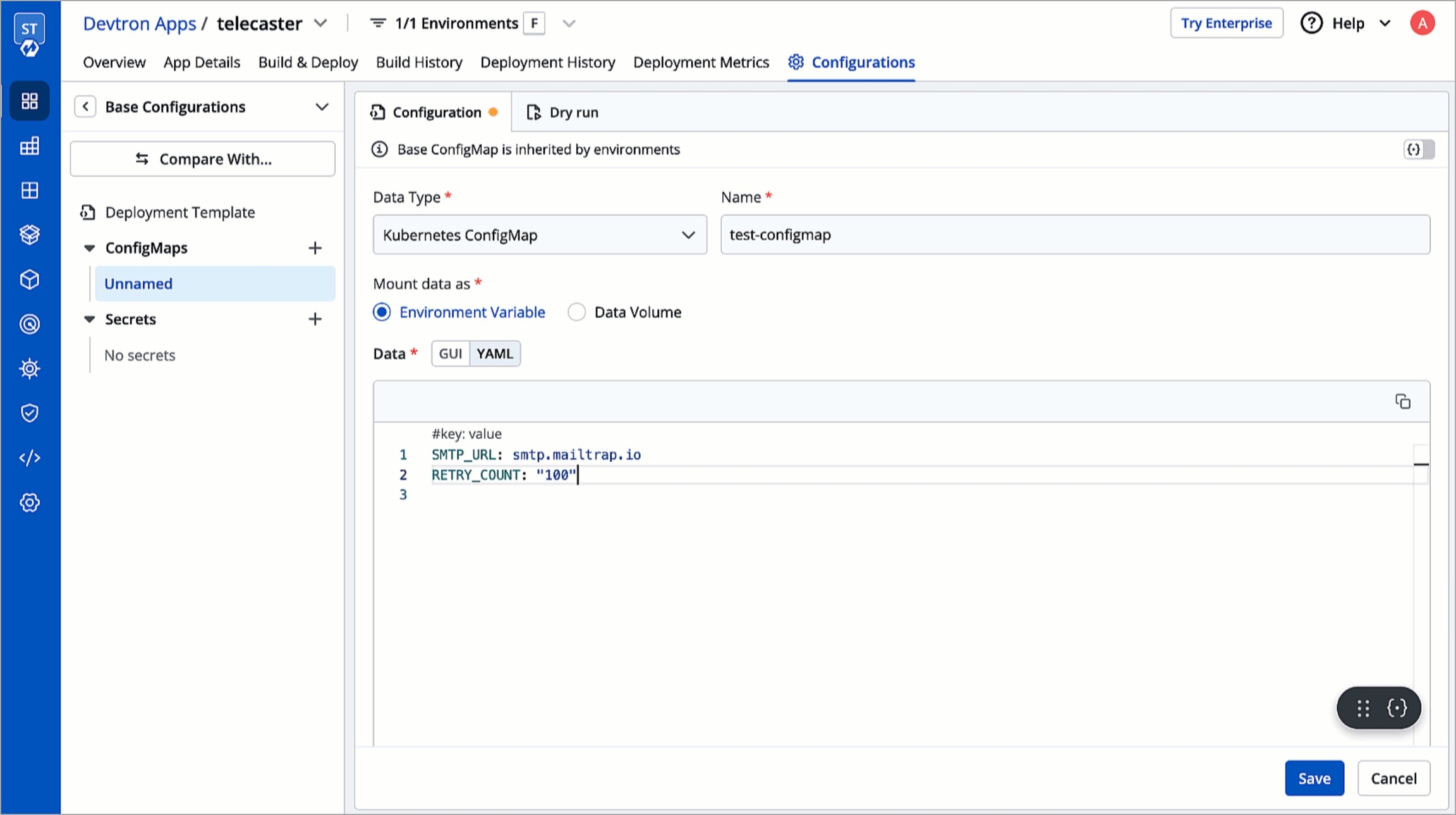

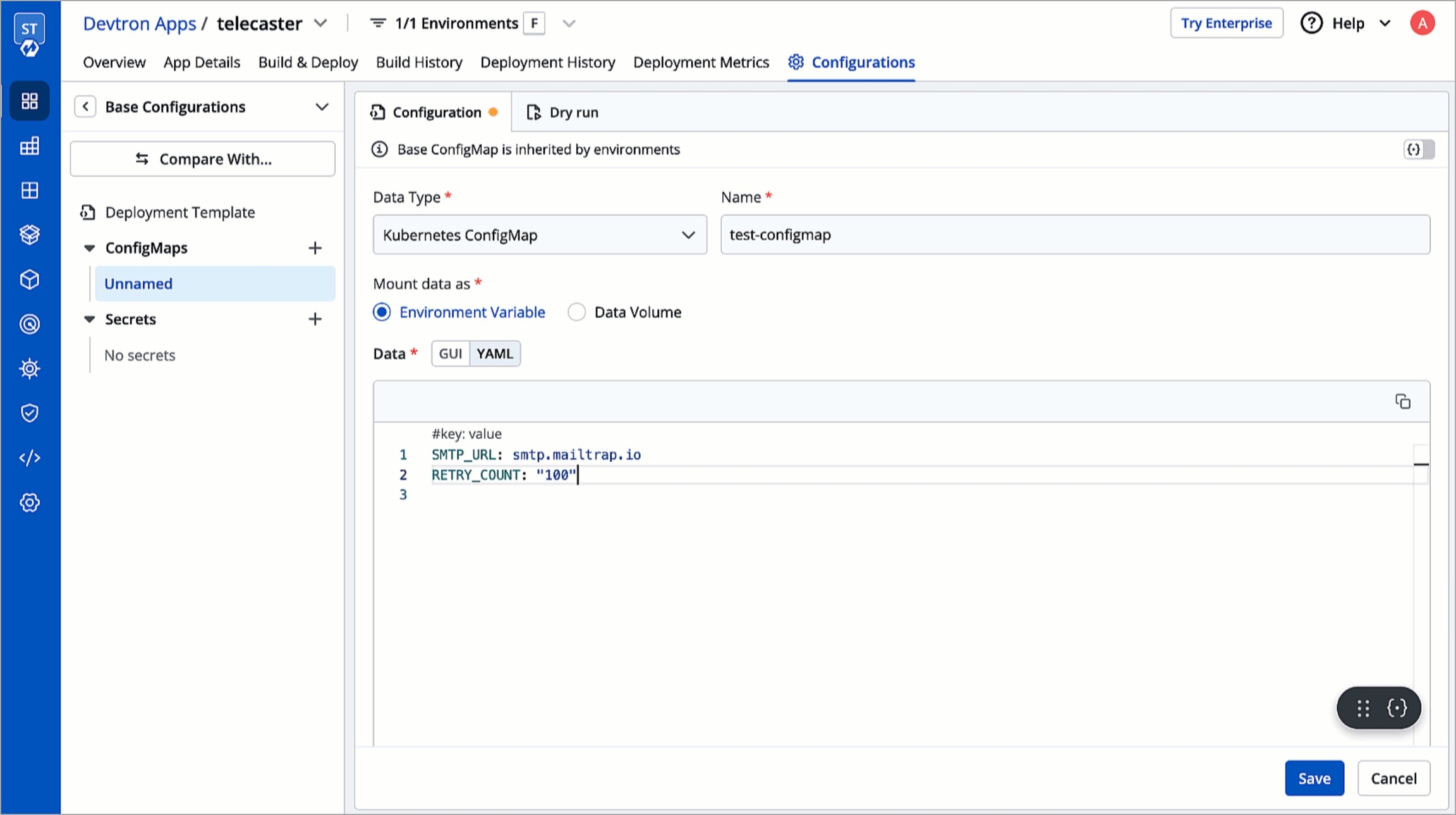

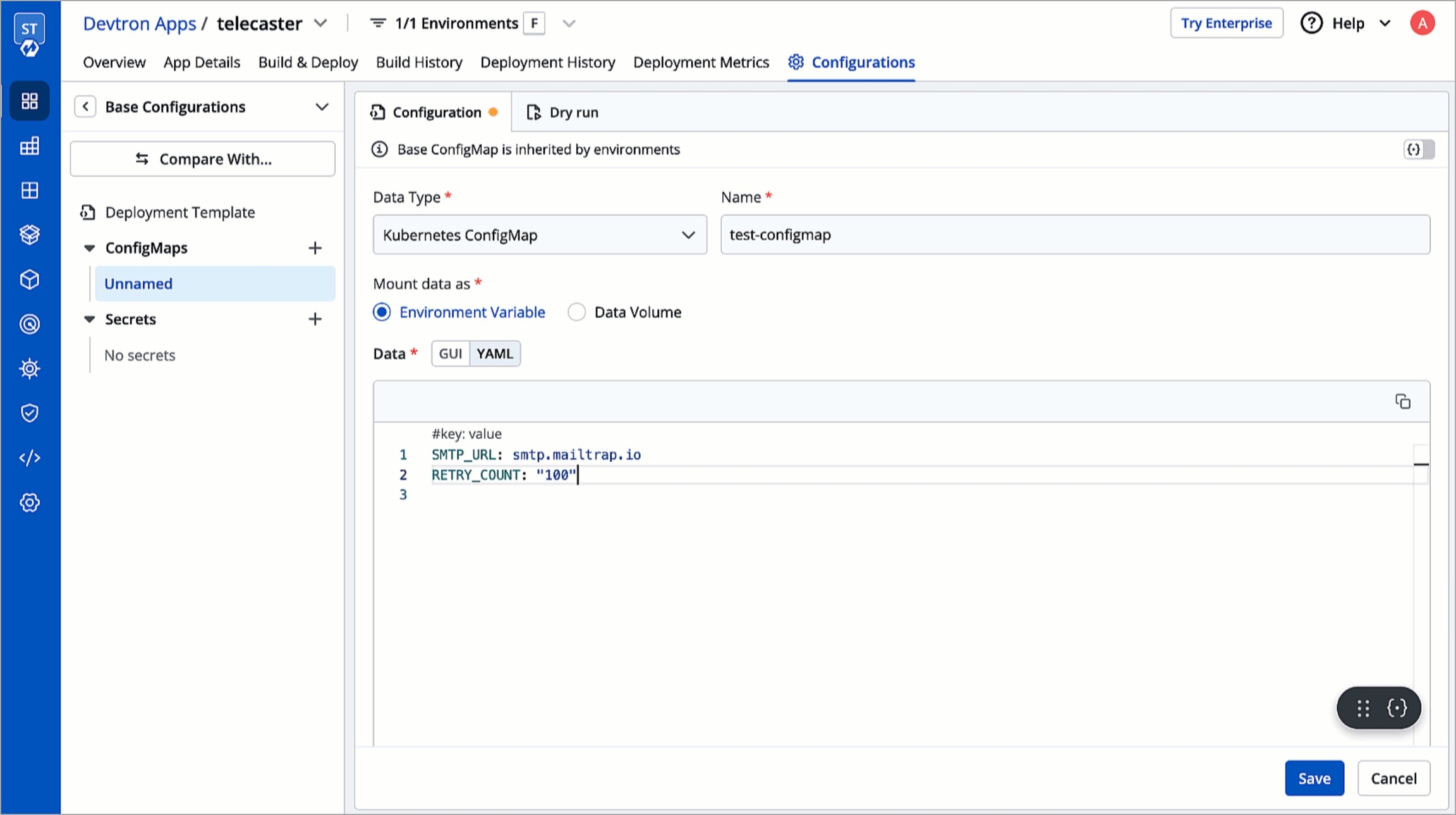

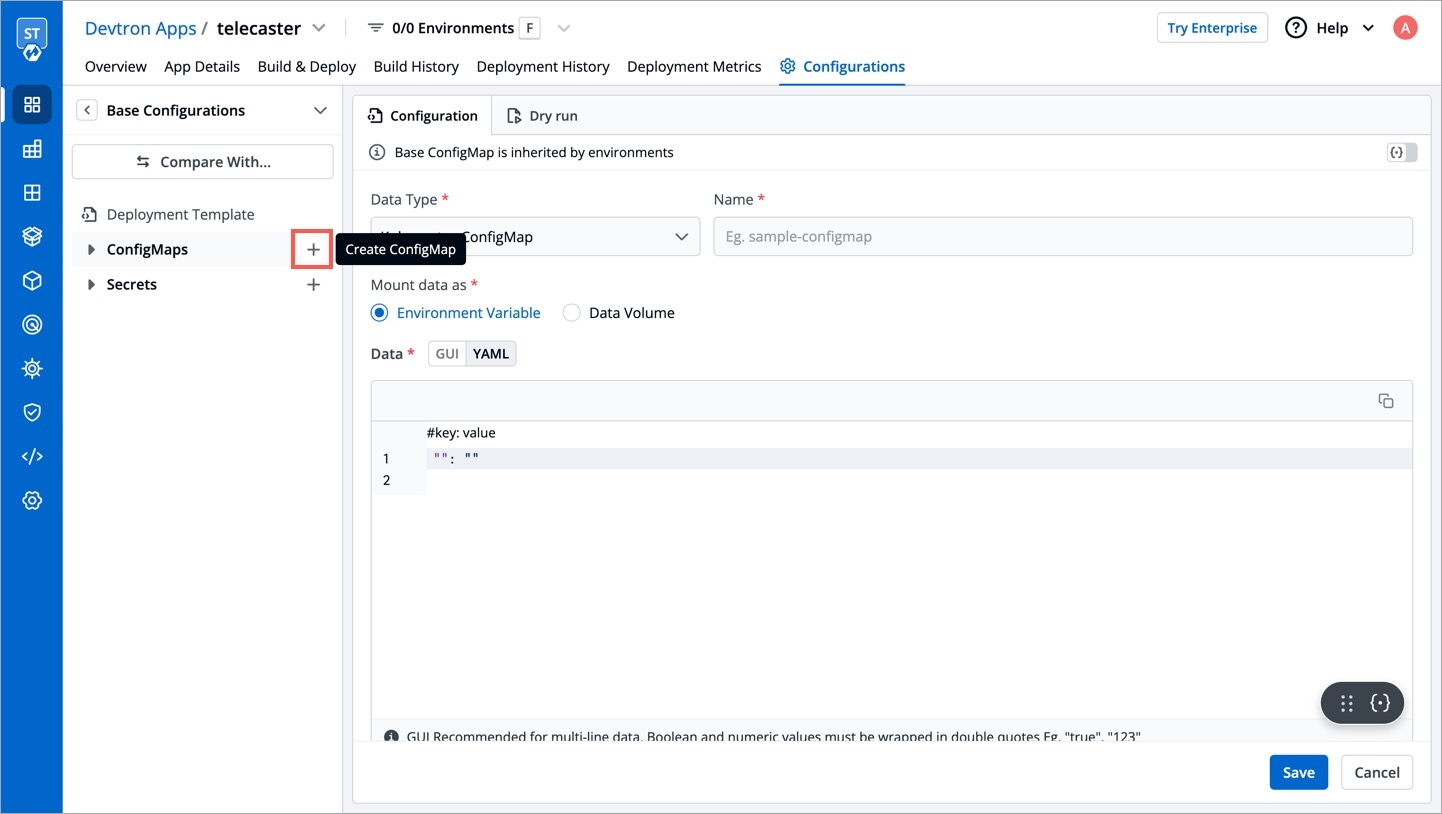

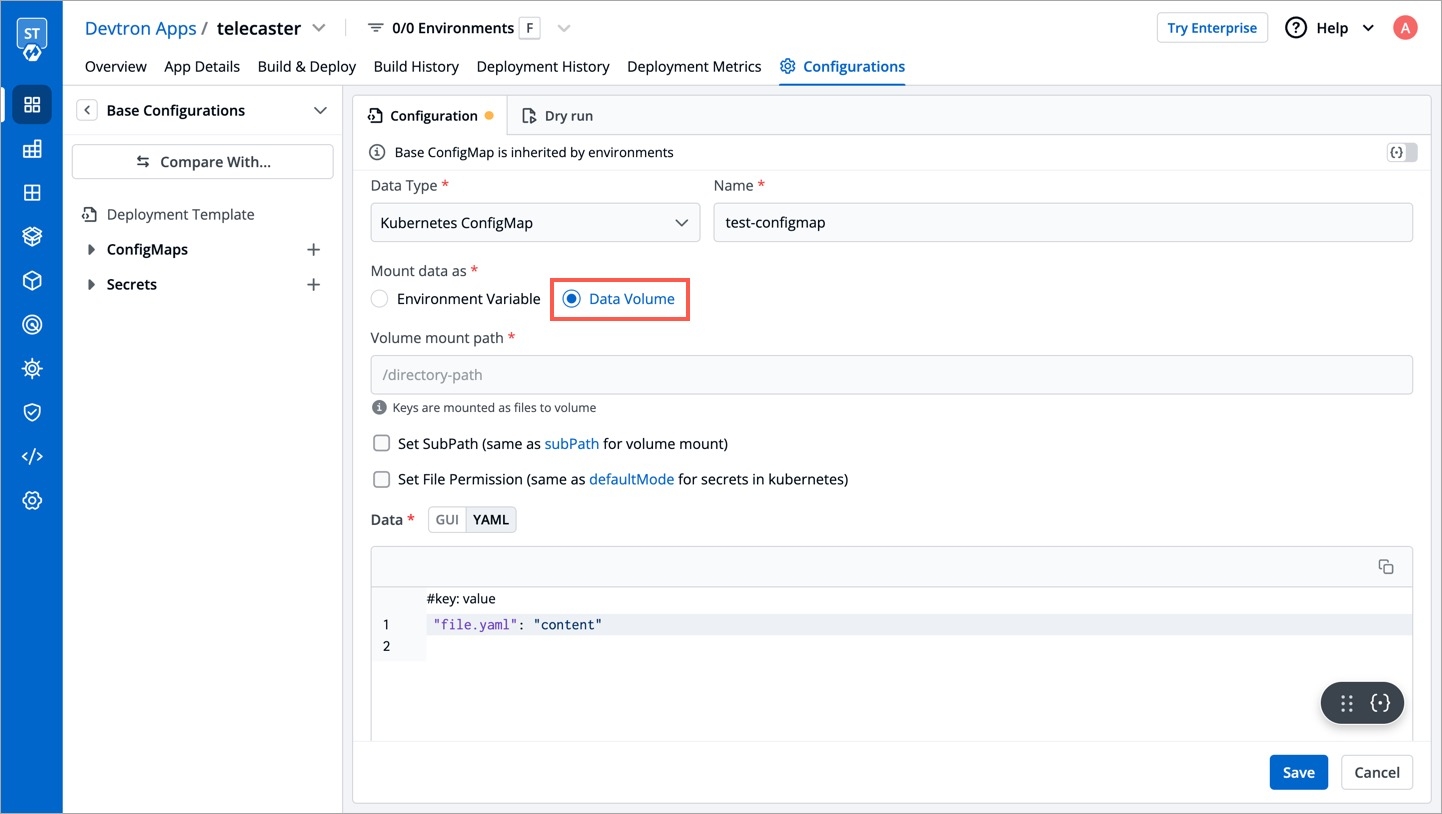

The ConfigMap API resource holds key-value pairs of the configuration data that can be consumed by pods or used to store configuration data for system components such as controllers. ConfigMap is similar to Secrets, but designed to more conveniently support working with strings that do not contain sensitive information.

Click on Add ConfigMap to add a config map to your application.

You can configure a configmap in two ways-

(a) Using data type Kubernetes ConfigMap

(b) Using data type Kubernetes External ConfigMap

1. Data Type

Select the Data Type as Kubernetes ConfigMap, if you wish to use the ConfigMap created by Devtron.

2. Name

Provide a name to your configmap.

3. Use ConfigMap as

Here we are providing two options, one can select any of them as per your requirement

-Environment Variable as part of your configMap or you want to add Data Volume to your container using Config Map.

Environment Variable

Select this option if you want to add Environment Variables as a part of configMap. You can provide Environment Variables in key-value pairs, which can be seen and accessed inside a pod.

Data Volume

Select this option if you want to add a Data Volume to your container using the Config Map.

Key-value pairs that you provide here, are provided as a file to the mount path. Your application will read this file and collect the required data as configured.

4. Data

In the Data section, you provide your configmap in key-value pairs. You can provide one or more than one environment variable.

You can provide variables in two ways-

YAML (raw data)

GUI (more user friendly)

Once you have provided the config, You can click on any option-YAML or GUI to view the key and Value parameters of the ConfigMap.

Kubernetes ConfigMap using Environment Variable:

If you select Environment Variable in 3rd option, then you can provide your environment variables in key-value pairs in the Data section using YAML or GUI.

Data in YAML (please Check below screenshot)

Now, Click on Save ConfigMap to save your configmap configuration.

Kubernetes ConfigMap using Data Volume

Provide the Volume Mount folder path in Volume Mount Path, a path where the data volume needs to be mounted, which will be accessible to the Containers running in a pod.

You can add Configuration data as in YAML or GUI format as explained above.

You can click on YAML or GUI to view the key and Value parameters of the ConfigMap that you have created.

You can click on Save ConfigMap to save the configMap.

For multiple files mount at the same location you need to check sub path bool field, it will use the file name (key) as sub path. Sub Path feature is not applicable in case of external configmap.

File permission will be provide at the configmap level not on the each key of the configmap. It will take 3 digit standard permission for the file.

You can select Kubernetes External ConfigMap in the data type field if you have created a ConfigMap using the kubectl command.

By default, the data type is set to Kubernetes ConfigMap.

Kubernetes External ConfigMap is created using the kubectl create configmap command. If you are using Kubernetes External ConfigMap, make sure you give the name of ConfigMap the same as the name that you have given using kubectl create Configmap <configmap-name> <data source> command, otherwise, it might result in an error during the built.

You have to ensure that the External ConfigMap exists and is available to the pod.

The config map is created.

You can update your configmap anytime later but you cannot change the name of your configmap. If you want to change the name of the configmap then you have to create a new configmap. To update configmap, click on the configmap you have created make changes as required.

Click on Update Configmap to update your configmap.

You can delete your configmap. Click on your configmap and click on the delete sign to delete your configmap.

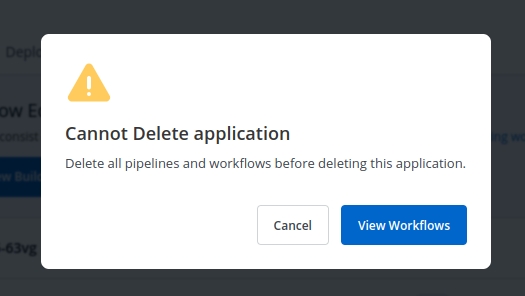

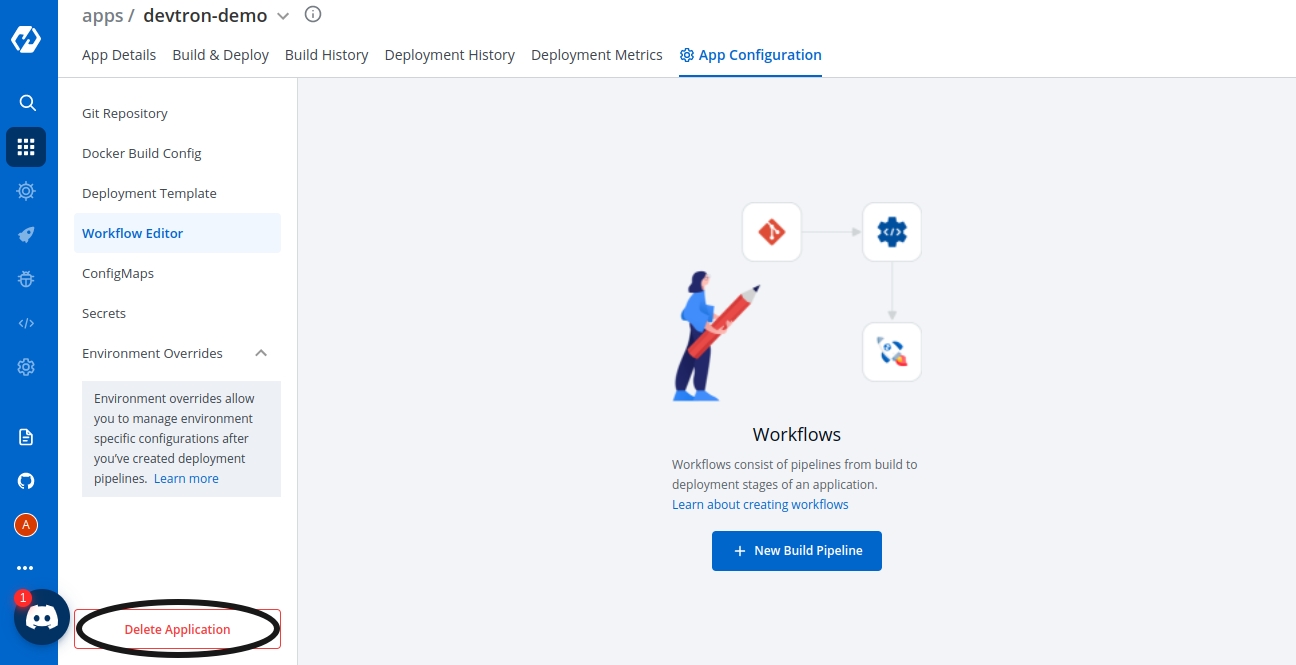

Delete the Application, when you are sure you no longer need it.

Clicking on Delete Application will not delete your application if you have workflows in the application.

If your Application contains workflows in the Workflow Editor. So, when you click on Delete Application, you will see the following prompt.

Click on View Workflows to view and delete your workflows in the application.

To delete the workflows in your application, you must first delete all the pipelines (CD Pipeline, CI Pipeline or Linked CI Pipeline or External CI Pipeline if there are any).

After you have deleted all the pipelines in the workflow, you can delete that particular workflow.

Similarly, delete all the workflows in the application.

Now, Click on Delete Application to delete the application.

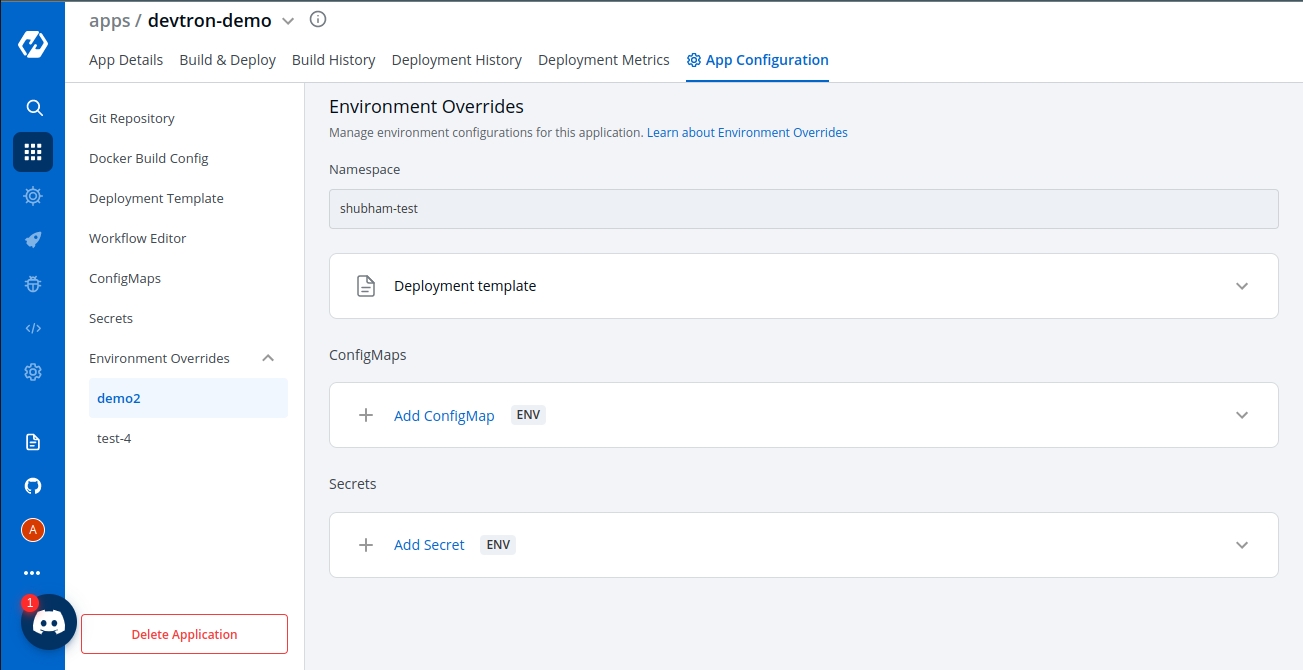

You will see all your environments associated with an application under the Environment Overrides section.

You can customize your Deployment template, ConfigMap, Secrets in Environment Overrides section to add separate customizations for different environments such as dev, test, integration, prod, etc.

If you want to deploy an application in a non-production environment and then in production environment once testing is done in non-prod environment, then you do not need to create a new application for prod environment. Your existing pipeline(non-prod env) will work for both the environments with little customization in your deployment template under Environment overrides.

In a Non-production environment, you may have specified 100m CPU resources in the deployment template but in the Production environment you may want to have 500m CPU resources as the traffic on Pods will be higher than traffic on non-prod env.

Configuring the Deployment template inside Environment Overrides for a specific environment will not affect the other environments because Environment Overrides will configure deployment templates on environment basis. And at the time of deployment, it will always pick the overridden deployment template if any.

If there are no overrides specified for an environment in the Environment Overrides section, the deployment template will be the one you specified in the deployment template section of the app creation.

(Note: This example is meant only for a representational purpose. You can choose to add any customizations you want in your deployment templates in the Environment Overrides tab)

Any changes in the configuration will not be added to the template, instead, it will make a copy of the template and lets you customize it for each particular environment. And now this overridden template will be used only for the specified Environment.

This will save you the trouble to manually create deployment files separately for each environment. Instead, all you have to do is to change the required variables in the deployment template.

In the Environment Overrides section, click on Allow Override and make changes to your Deployment template and click on Save to save your changes of the Deployment template.

The same goes for ConfigMap and Secrets. You can also create an environment-specific configmap and Secrets inside the Environment override section.

Click on Update ConfigMap to update Configmaps.

Click on Update Secrets to update Secrets.

Once you are done creating your CI pipeline, you can move start building your CD pipeline. Devtron enables you to design your CD pipeline in a way that fully automates your deployments.

Click on “+” sign on CI Pipeline to attach a CD Pipeline to it. A basic Create deployment modal will pop up.

This section expects two inputs:

Select Environment

Deployment Strategy

This section further including two inputs:

(a) Deploy to Environment

Select the environment where you want to deploy your application.

(b) Namespace

This field will be automatically populated with the Namespace corresponding to the Environment selected in the previous step.

Click on Create Pipeline to create a CD pipeline.

One can have a single CD pipeline or multiple CD pipelines connected to the same CI Pipeline. Each CD pipeline corresponds to only one environment, or in other words, any single environment of an application can have only one CD pipeline. So, the images created by the CI pipeline can be deployed into multiple environments through different CD pipelines originating from a single CI pipeline. If you already have one CD pipeline and want to add more, you can add them by clicking on the

+sign and then choosing the environment in which you want to deploy your application. Once a new CD Pipeline is created for the environment of your choosing, you can move ahead and configure the CD pipeline as required. Your CD pipeline can be configured for the pre-deployment stage, the deployment stage, and the post-deployment stage. You can also select the deployment strategy of your choice. You can add your configurations as explained below:

To configure the advance CD option click on Advance Options at the bottom.

Pipeline name will be autogenerated.

As we discussed above, Select the environment where you want to deploy your application. Once you select the environment, it will display the Namespace corresponding to your selected environment automatically.

There are 3 dropdowns given below:

Pre-deployment stage

Deployment stage

Post-deployment stage

Sometimes one has a requirement where certain actions like DB migration are to be executed before deployment, the Pre-deployment stage should be used to configure these actions.

Pre-deployment stages can be configured to be executed automatically or manually.

If you select automatic, Pre-deployment Stage will be triggered automatically after the CI pipeline gets executed and before the CD pipeline starts executing itself. But, if you select a manual, then you have to trigger your stage via console.

If you want to use some configuration files and secrets in pre-deployment stages or post-deployment stages, then you can use the Config Maps & Secrets options.

Config Maps can be used to define configuration files. And Secrets can be defined to store the private data of your application.

Once you are done defining Config Maps & Secrets, you will get them as a drop-down in the pre-deployment stage and you can select them as part of your pre-deployment stage.

These Pre-deployment CD / Post-deployment CD pods can be created in your deployment cluster or the devtron build cluster. It is recommended that you run these pods in the Deployment cluster so that your scripts (if there are any) can interact with the cluster services that may not be publicly exposed.

If you want to run it inside your application, then you have to check the Execute in application Environment option else leave it unchecked to run it within the Devtron build cluster.

Make sure your cluster has devtron-agent installed if you check the Execute in the application Environment option.

(a) Deploy to Environment

Select the environment where you want to deploy your application. Once you select the environment, it will display the Namespace corresponding to your selected environment automatically.

(b)We support two methods of deployments - Manual and Automatic. If you choose automatic, it will trigger your CD pipeline automatically once the corresponding CI pipeline has been executed successfully.

If you have defined pre-deployment stages, then the CD Pipeline will be triggered automatically after the successful execution of your CI pipeline followed by the successful execution of your pre-deployment stages. But if you choose the manual option, then you have to trigger your deployment manually via console.

(c) Deployment Strategy

Devtron's tool has 4 types of deployment strategies. Click on Add Deployment strategy and select from the available options:

(a) Recreate

(b) Canary

(c) Blue Green

(d) Rolling

If you want to Configure actions like Jira ticket close, that you want to run after the deployment, you can configure such actions in the post-deployment stages.

Post-deployment stages are similar to pre-deployment stages. The difference is, pre-deployment executes before the CD pipeline execution and post-deployment executes after the CD pipeline execution. The configuration of post-deployment stages is similar to the pre-deployment stages.

You can use Config Map and Secrets in post deployments as well, as defined in the Pre-Deployment stages.

Once you have configured the CD pipeline, click on Create Pipeline to save it. You can see your newly created CD Pipeline on the Workflow tab attached to the corresponding CI Pipeline.

You can update the deployment stages and the deployment strategy of the CD Pipeline whenever you require it. But, you cannot change the name of a CD Pipeline or its Deployment Environment. If you need to change such configurations, you need to make another CD Pipeline from scratch.

To Update a CD Pipeline, go to the App Configurations section, Click on Workflow editor and then click on the CD Pipeline you want to Update.

Make changes as needed and click on Update Pipeline to update this CD Pipeline.

If you no longer require the CD Pipeline, you can also Delete the Pipeline.

To Delete a CD Pipeline, go to the App Configurations and then click on the Workflow editor. Now click on the pipeline you want to delete. A pop will be displayed with CD details. Verify the name and the details to ensure that you are not accidentally deleting the wrong CD pipeline and then click on the Delete Pipeline option to delete the CD Pipeline.

A deployment strategy is a way to make changes to an application, without downtime in a way that the user barely notices the changes. There are different types of deployment strategies like Blue/green Strategy, Rolling Strategy, Canary Strategy, Recreate Strategy. These deployment configuration-based strategies are discussed in this section.

Blue Green Strategy

Blue-green deployments involve running two versions of an application at the same time and moving traffic from the in-production version (the green version) to the newer version (the blue version).

Rolling Strategy

A rolling deployment slowly replaces instances of the previous version of an application with instances of the new version of the application. Rolling deployment typically waits for new pods to become ready via a readiness check before scaling down the old components. If a significant issue occurs, the rolling deployment can be aborted.

Canary Strategy

Canary deployments are a pattern for rolling out releases to a subset of users or servers. The idea is to first deploy the change to a small subset of servers, test it, and then roll the change out to the rest of the servers. The canary deployment serves as an early warning indicator with less impact on downtime: if the canary deployment fails, the rest of the servers aren't impacted.

Recreate

The recreate strategy is a dummy deployment that consists of shutting down version A then deploying version B after version A is turned off. A recreate deployment incurs downtime because, for a brief period, no instances of your application are running. However, your old code and new code do not run at the same time.

It terminates the old version and releases the new one.

Devtron now supports attaching multiple deployment pipelines to a single build pipeline, in its workflow editor. This feature lets you deploy an image first to stage, run tests and then deploy the same image to production.

Please follow the steps mentioned below to create sequential pipelines :

After creating CI/build pipeline, create a CD pipeline by clicking on the + sign on CI pipeline and configure the CD pipeline as per your requirements.

To add another CD Pipeline sequentially after previous one, again click on + sign on the last CD pipeline.

Similarly, you can add multiple CD pipelines by clicking + sign of the last CD pipeline, each deploying in different environments.

Note: Deleting a CD pipeline also deletes all the K8s resources associated with it and will bring a disruption in the deployed micro-service. Before deleting a CD pipeline, please ensure that the associated resources are not being used in any production workload.

CI Pipeline can be created in three different ways, , and .

Each of these methods have different use-cases which can be used according to the needs of the organization. Let’s begin with Continuous Integration.

Click on Continuous Integration, a prompt comes up in which we need to provide our custom configurations. Below is the description of some configurations which are required.

[Note] Options such as pipeline execution, stages and scan for vulnerabilities, will be visible after clicking on advanced options present in the bottom left corner.

Pipeline name is an auto-generated name which can also be renamed by clicking on Advanced options.

You can select the method you want to execute the pipeline. By default the value is automatic. In this case it will get automatically triggered if any changes are made to the respective git repository. You can set it to manual if you want to trigger the pipeline manually.

In source type, we can observe that we have three types of mechanisms which can be used for building your CI Pipeline. In the drop-down you can observe we have Branch Fixed, Pull Request and Tag Creation.

If you select the Branch Fixed as your source type for building CI Pipeline, then you need to provide the corresponding Branch Name.

Branch Name is the name of the corresponding branch (eg. main or master, or any other branch)

If you select the Pull Request option, you can configure the CI Pipeline using the generated PR. For this mechanism you need to configure a webhook for the repository added in the Git Material.

Prerequisites for Pull Request

If using GitHub - To use this mechanism, as stated above you need to create a webhook for the corresponding repository of your Git Provider. In Github to create a webhook for the repository -

Go to settings of that particular repository

Click on webhook section under options tab

In the Payload URL section, please copy paste the Webhook URL which can be found at Devtron Dashboard when you select source type as Pull Request as seen in above image.

Change content type to - application/json

Copy paste the Secret as well from the Dashboard when you select the source type as Pull Request

Now, scroll down and select the custom events for which you want to trigger the webhook to build CI Pipeline -

Check the radio button for Let me select individual events

Then, check the Branch or Tag Creation and Pull Request radio buttons under the individual events as mentioned in image below.

[Note] If you select Branch or Tag Creation, it will work for the Tag Creation mechanism as well.

After selecting the respective options, click on the generate the webhook button to create a webhook for your respective repository.

If using Bitbucket Cloud - If you are using Bitbucket cloud as your git provider, you need to create a webhook for that as we created for Github in the above section. Follow the steps to create webhook -

Go to Repository Settings on left sidebar of repository window

Click on Webhooks and then click on Add webhook as shown in the image.

Give any appropriate title as per your choice and then copy-paste the url which you can get from Devtron Dashboard when you select Pull Request as source type in case of Bitbucket Cloud as Git Host.

Check the Pull Request events for which you want to trigger the webhook and then save the configurations.

Filters

Now, coming back to the Pull Request mechanism, you can observe we have the option to add filters. In a single repository we have multiple PRs generated, so to have the exact PR for which you want to build the CI Pipeline, we have this feature of filters.

You can add a few filters which can be seen in the dropdown to sort the exact PR which you want to use for building the pipeline.

Below are the details of different filters which you can use as per your requirement. Please select any of the filters and pass the value in regex format as one has already given for example and then click on Create Pipeline.

The third option i.e, Tag Creation. In this mechanism you need to provide the tag name or author to specify the exact tag for which you want to build the CI Pipeline. To work with this feature as well, you need to configure the webhook for either Github or Bitbucket as we did in the previous mechanism i.e, Pull Request.

In this process as well you can find the option to filter the specific tags with certain filter parameters. Select the appropriate filter as per your requirement and pass the value in form of regex, one of the examples is already given.

Select the appropriate filter and pass the value in the form of regex and then click on Create Pipeline.

When you click on the advanced options button which can be seen at the bottom-left of the screen, you can see some more configuration options which includes pipeline execution, stages and scan for vulnerabilities.

There are 3 dropdowns given below:

Pre-build

Docker build

Post-build

(a) Pre-build

This section is used for those steps which you want to execute before building the Docker image. To add a Pre-build stage, click on Add Stage and provide a name to your pre-stage and write your script as per your requirement. These stages will run in sequence before the docker image is built. Optionally, you can also provide the path of the directory where the output of the script will be stored locally.

You can add one or more than one stage in a CI Pipeline.

(b) Docker build

Though we have the option available in the Docker build configuration section to add arguments in key-value pairs for the docker build image. But one can also provide docker build arguments here as well. This is useful, in case you want to override them or want to add new arguments to build your docker image.

(c) Post-build

The post-build stage is similar to the pre-build stage. The difference between the post-build stage and the pre-build stage is that the post-build will run when your CI pipeline will be executed successfully.

Adding a post-build stage is similar to adding a pre-build stage. Click on Add Stage and provide a name to your post-stage. Here you can write your script as per your requirement, which will run in sequence after the docker image is built. You can also provide the path of the directory in which the output of the script will be stored in the Remote Directory column. And this is optional to fill because many times you run scripts that do not provide any output.

NOTE:

(a) You can provide pre-build and post-build stages via the Devtron tool’s console or can also provide these details by creating a file devtron-ci.yaml inside your repository. There is a pre-defined format to write this file. And we will run these stages using this YAML file. You can also provide some stages on the Devtron tool’s console and some stages in the devtron-ci.yaml file. But stages defined through the Devtron dashboard are first executed then the stages defined in the devtron-ci.yaml file.

(b) The total timeout for the execution of the CI pipeline is by default set as 3600 seconds. This default timeout is configurable according to the use-case. The timeout can be edited in the configmap of the orchestrator service in the env variable env:"DEFAULT_TIMEOUT" envDefault:"3600"

Scan for vulnerabilities adds a security feature to your application. If you enable this option, your code will be scanned for any vulnerabilities present in your code. And you will be informed about these vulnerabilities. For more details please check doc

You have provided all the details required to create a CI pipeline, now click on Create Pipeline.

You can also update any configuration of an already created CI Pipeline, except the pipeline name. The pipeline name can not be edited.

Click on your CI pipeline, to update your CI Pipeline. A window will be popped up with all the details of the current pipeline.

Make your changes and click on Update Pipeline at the bottom to update your Pipeline.

You can only delete CI pipeline if you have no CD pipeline created in your workflow.

To delete a CI pipeline, go to the App Configurations and then click on Workflow editor

Click on Delete Pipeline at the bottom to delete the CD pipeline

The test cases given in the script will run before the test cases given in the devtron.ci.yaml

If one code is shared across multiple applications, Linked CI Pipeline can be used, and only one image will be built for multiple applications because if there is only one build, it is not advisable to create multiple CI Pipelines.

To create a Linked CI Pipeline, please follow the steps mentioned below :

Click on + New Build Pipeline button.

Select Linked CI Pipeline.

Select the application in which the source CI pipeline is present.

Select the source CI pipeline.

Provide a name for linked CI pipeline.

Click on Create Linked CI Pipeline button to create linked CI pipeline.

After creating a linked CI pipeline, you can create a CD pipeline. You cannot trigger build from linked CI pipeline, it can be triggered only from source CI pipeline. Initially you will not see any images to deploy in CD pipeline created from linked CI pipeline. Trigger build in source CI pipeline to see the images in CD pipeline of linked CI pipeline. After this, whenever you trigger buld in source CI pipeline, the build images will be listed in CD pipeline of linked CI pipeline too.

You can use Devtron for deployments on Kubernetes while using your own CI tool such as Jenkins. External CI features can be used for cases where the CI tool is hosted outside the Devtron platform.

You can send the ‘Payload script’ to your CI tools such as Jenkins and Devtron will receive the build image every time the CI Service is triggered or you can use the Webhook URL which will build an image every time CI Service is triggered using Devtron Dashboard.

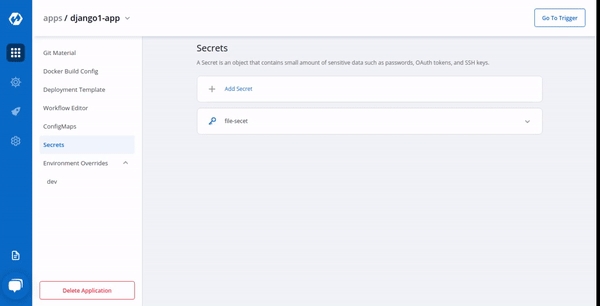

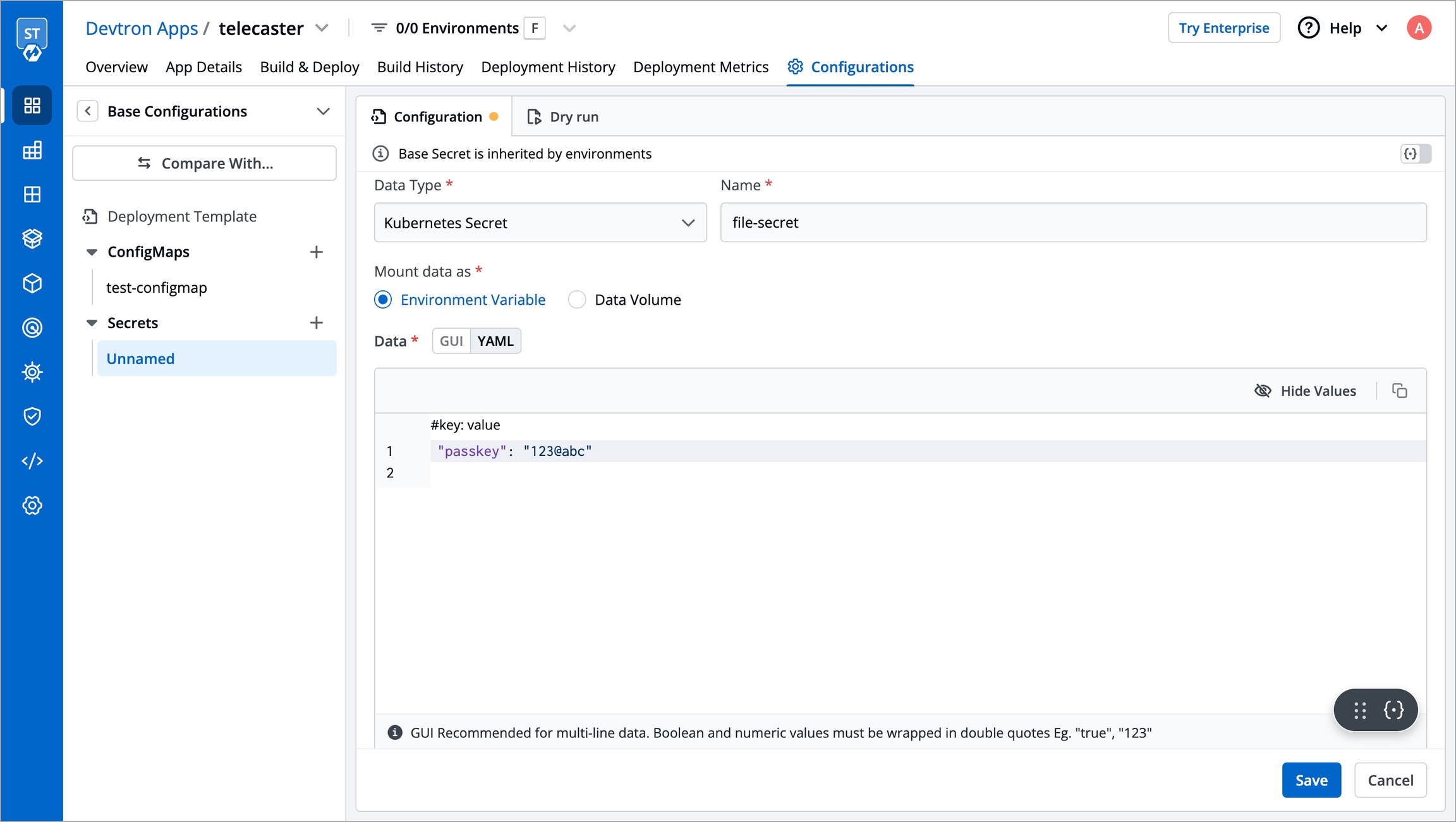

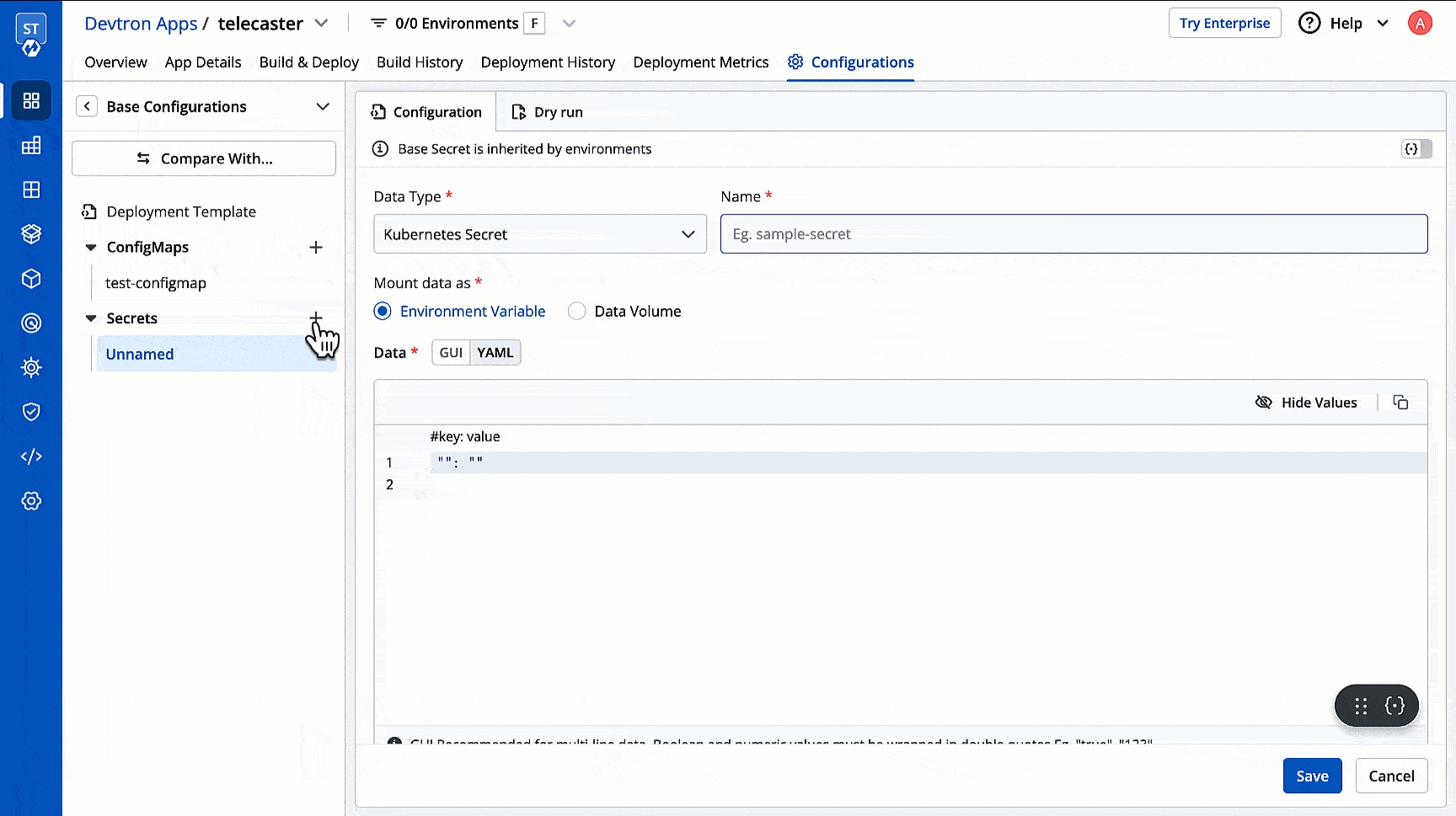

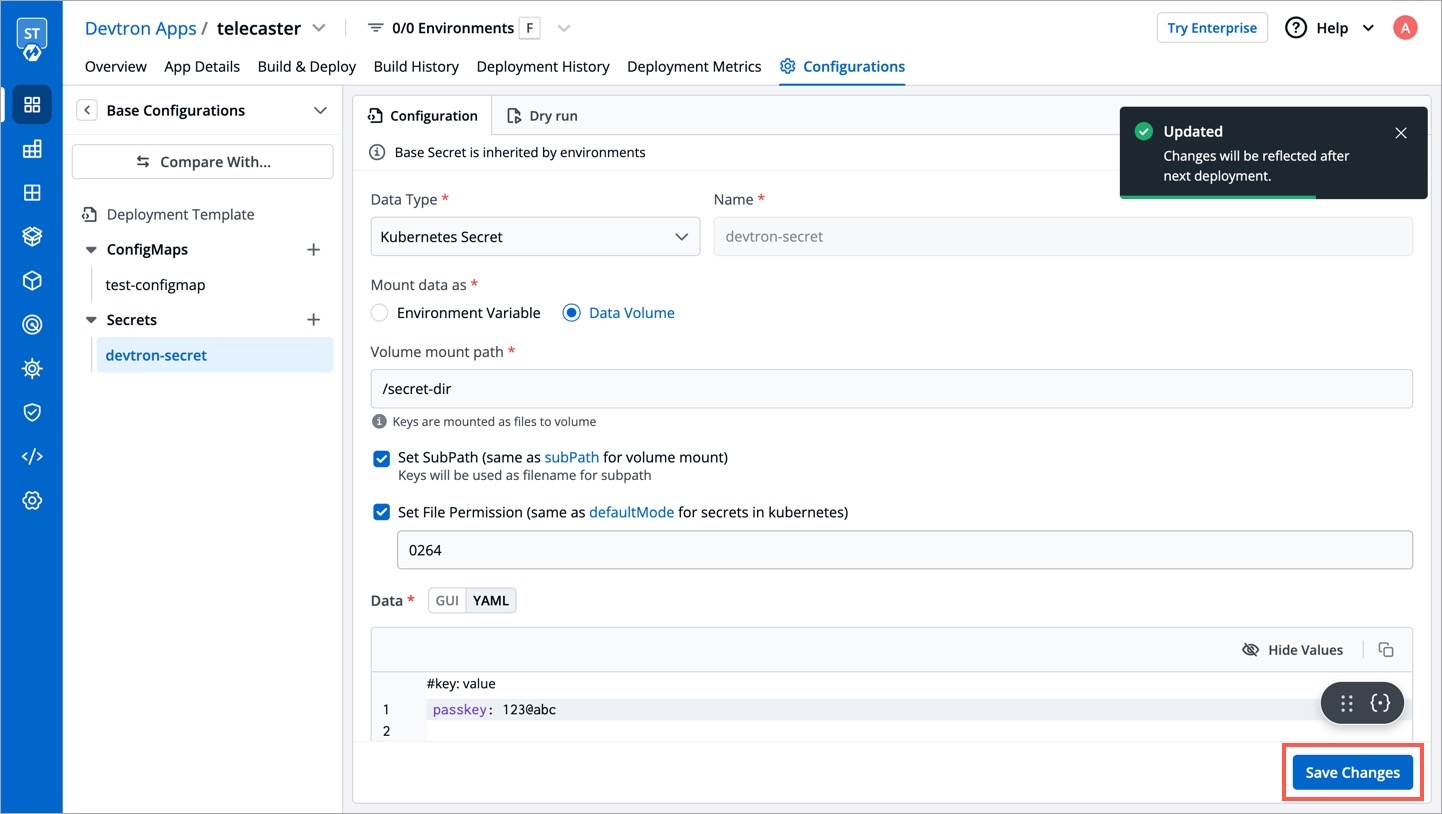

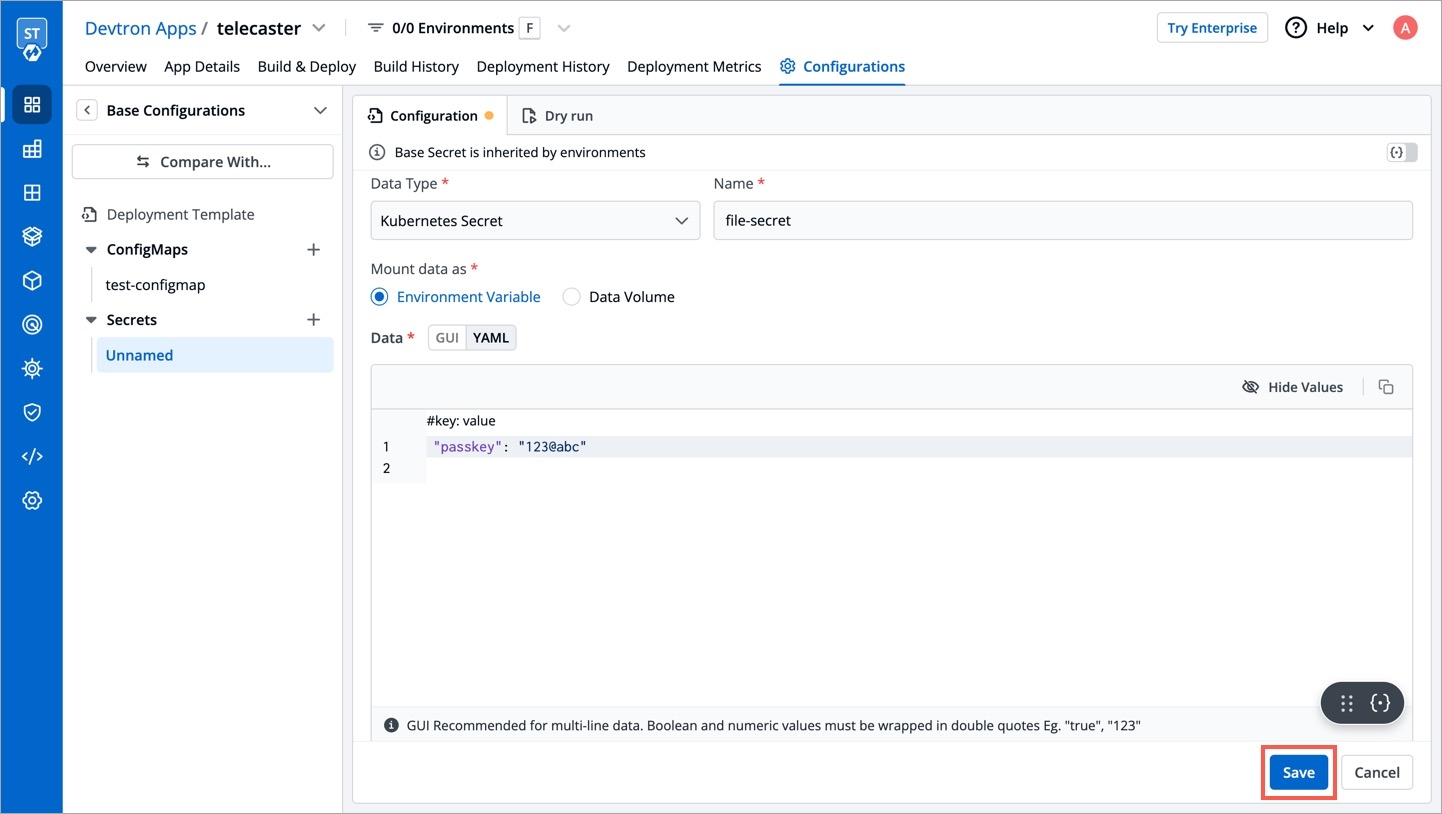

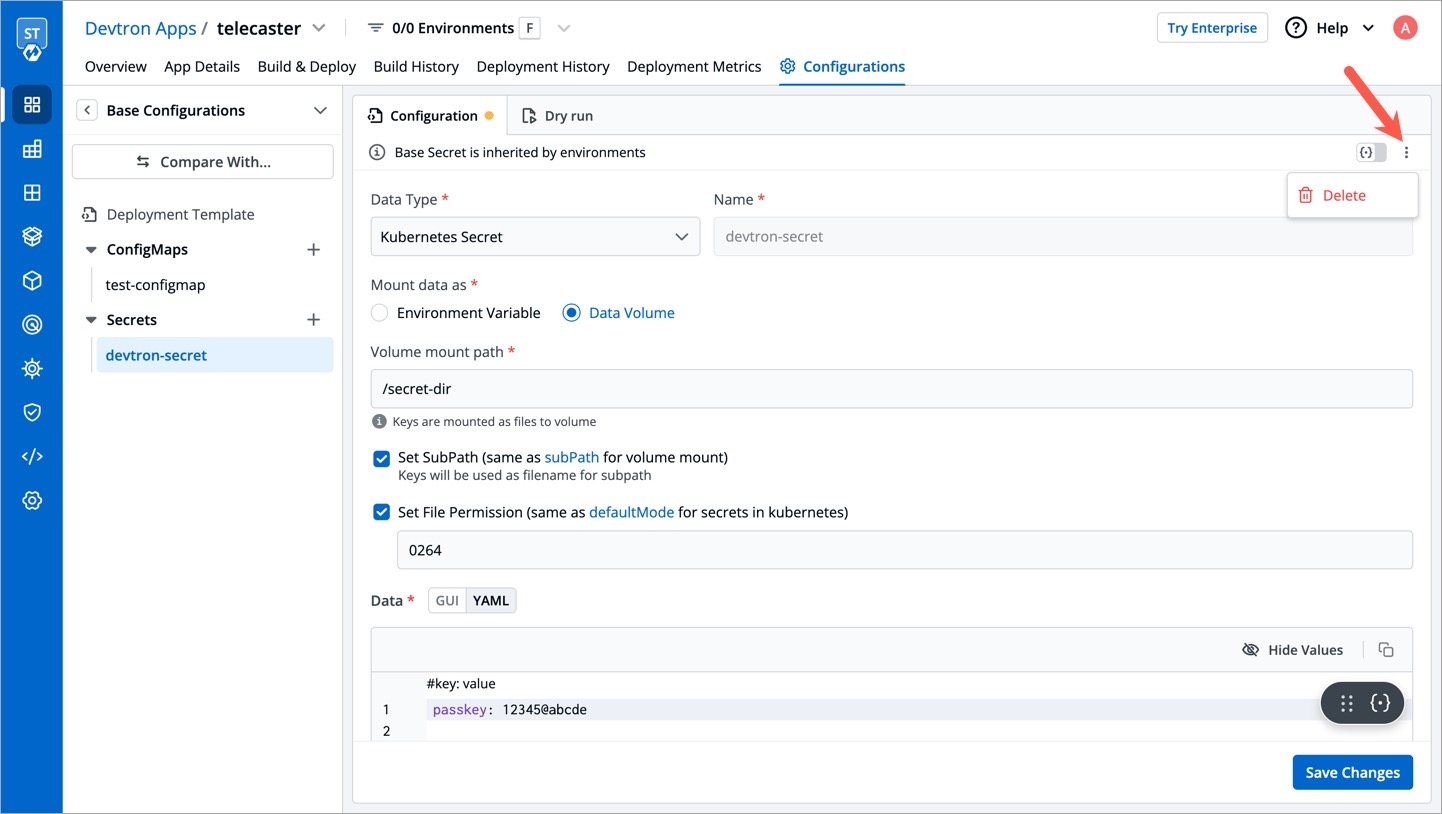

Secrets and configmaps both are used to store environment variables but there is one major difference between them: Configmap stores key-values in normal text format while secrets store them in base64 encrypted form. Devtron hides the data of secrets for the normal users and it is only visible to the users having edit permission.

Secret objects let you store and manage sensitive information, such as passwords, authentication tokens, and ssh keys. Embedding this information in secrets is safer and more flexible than putting it verbatim in a Pod definition or in a container image.

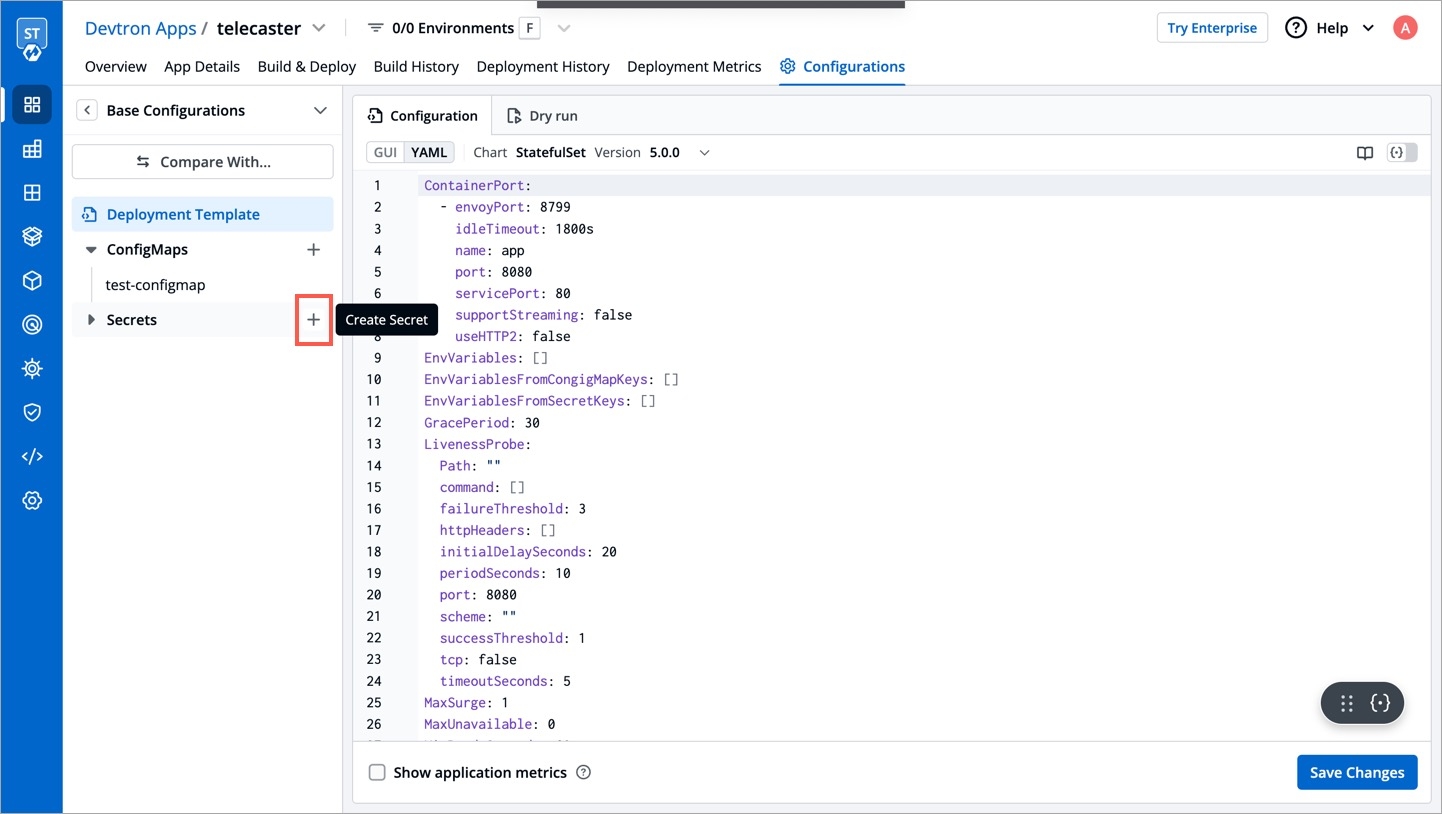

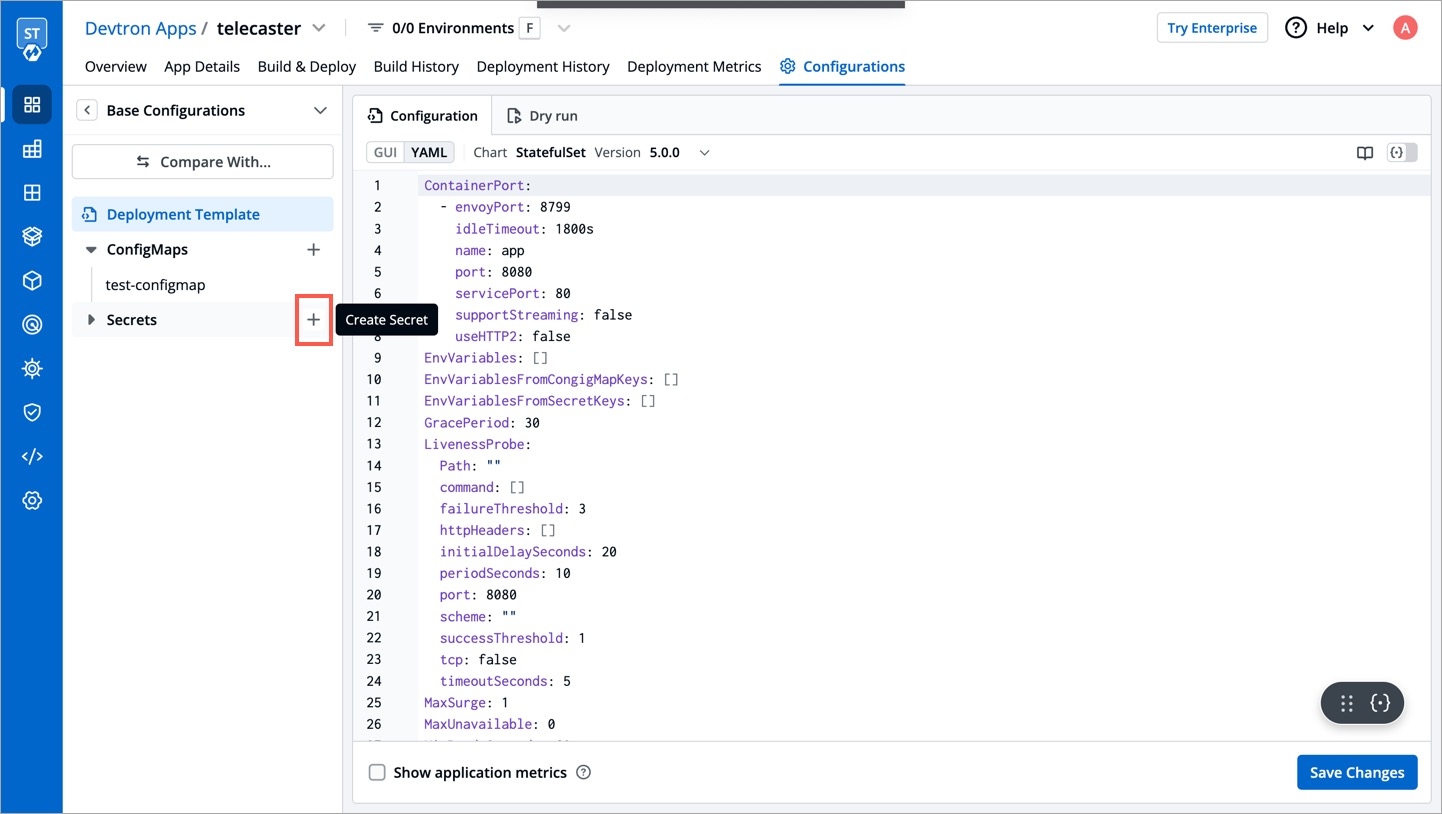

Click on Add Secret to add a new secret.

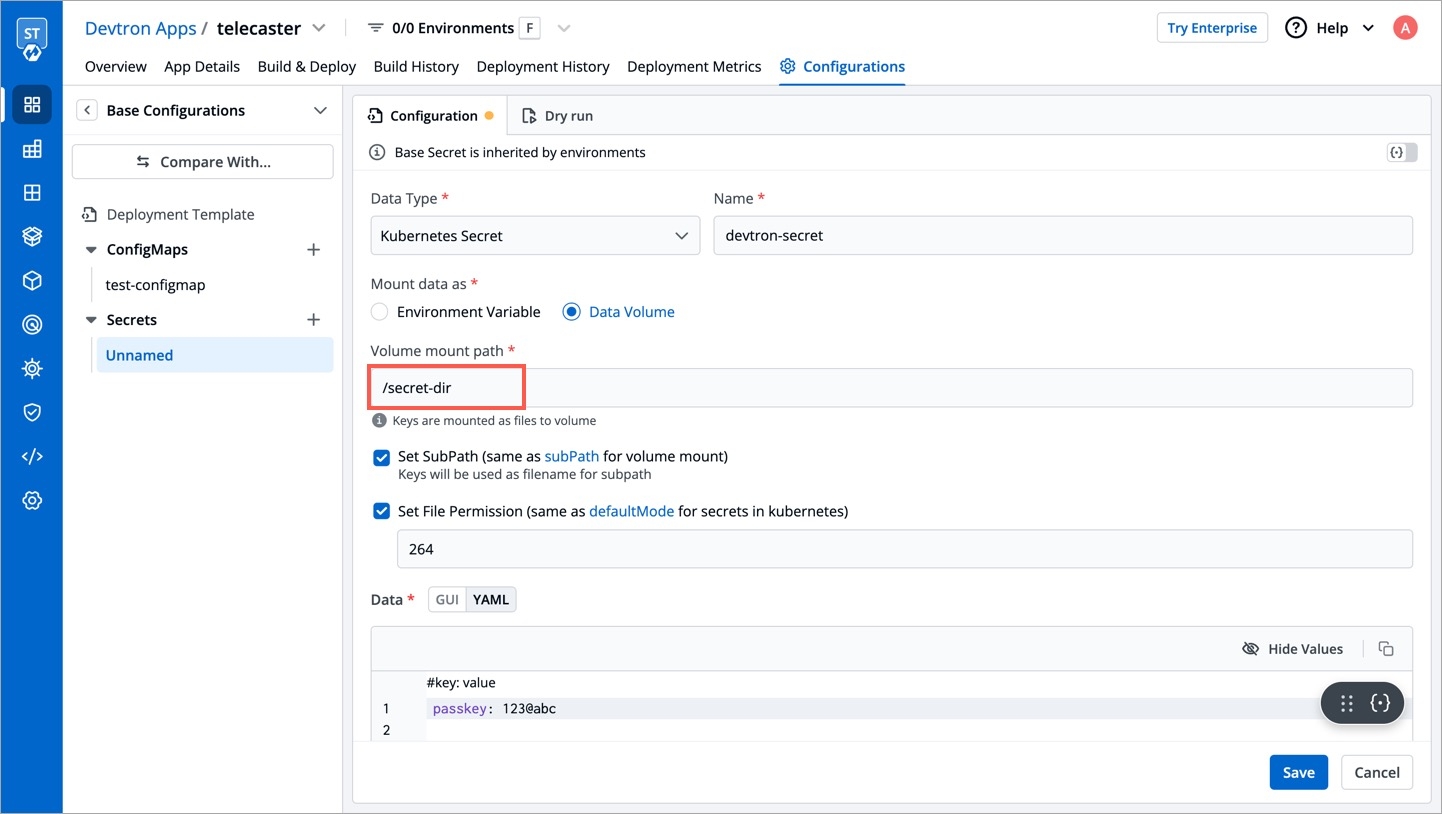

Specify the volume mount folder path in Volume Mount Path, a path where the data volume needs to be mounted. This volume will be accessible to the containers running in a pod.

For multiple files mount at the same location you need to check sub path bool field, it will use the file name (key) as sub path. Sub Path feature is not applicable in case of external configmap except AWS Secret Manager, AWS System Manager and Hashi Corp Vault, for these cases Name (Secret key) as sub path will be picked up automatically.

File permission will be provide at the configmap level not on the each key of the configmap. it will take 3 digit standard permission for the file.

Click on Save Secret to save the secret.

You can see the Secret is added.

You can update your secrets anytime later, but you cannot change the name of your secrets. If you want to change your name of secrets then you have to create a new secret.

To update secrets, click on the secret you wish to update.

Click on Update Secret to update your secret.

You can delete your secret. Click on your secret and click on the delete sign to delete your secret.

There are five Data types that you can use to save your secret.

Kubernetes Secret: The secret that you create using Devtron.

Kubernetes External Secret: The secret data of your application is fetched by Devtron externally. Then the Kubernetes External Secret is converted to Kubernetes Secret.

AWS Secret Manager: The secret data of your application is fetched from AWS Secret Manager and then converted to Kubernetes Secret from AWS Secret.

AWS System Manager: The secret data for your application is fetched from AWS System Secret Manager and all the secrets stored in AWS System Manager are converted to Kubernetes Secret.

Hashi Corp Vault: The secret data for your application is fetched from Hashi Corp Vault and the secrets stored in Hashi Corp Vault are converted to Kubernetes Secret.

Note: The conversion of secrets from various data types to Kubernetes Secrets is done within Devtron and irrespective of the data type, after conversion, the Pods access secrets normally.

In some cases, it may be that you already have secrets for your application on some other sources and you want to use that on devtron. External secrets are fetched by devtron externally and then converted to kubernetes secrets.

The secret that is already created and stored in the environment and being used by devtron externally is referred here as Kubernetes External Secret. For this option, devtron will not create any secret by itself but they can be used within the pods. Before adding secret from kubernetes external secret, please make sure that secret with the same name is present in the environment. To add secret from kubernetes external secret, follow the steps mentioned below:

Navigate to Secrets of the application.

Click on Add Secret to add a new secret.

Select Kubernetes External Secret from dropdown of Data type.

Provide a name to your secret. Devtron will search secret in the environment with the same name that you mention here.

Before adding any external secrets on devtron, kubernetes-external-secrets must be installed on the target cluster. Kubernetes External Secrets allows you to use external secret management systems (e.g., AWS Secrets Manager, Hashicorp Vault, etc) to securely add secrets in Kubernetes.

To install the chart with the release named my-release:

To install the chart with AWS IAM Roles for Service Accounts:

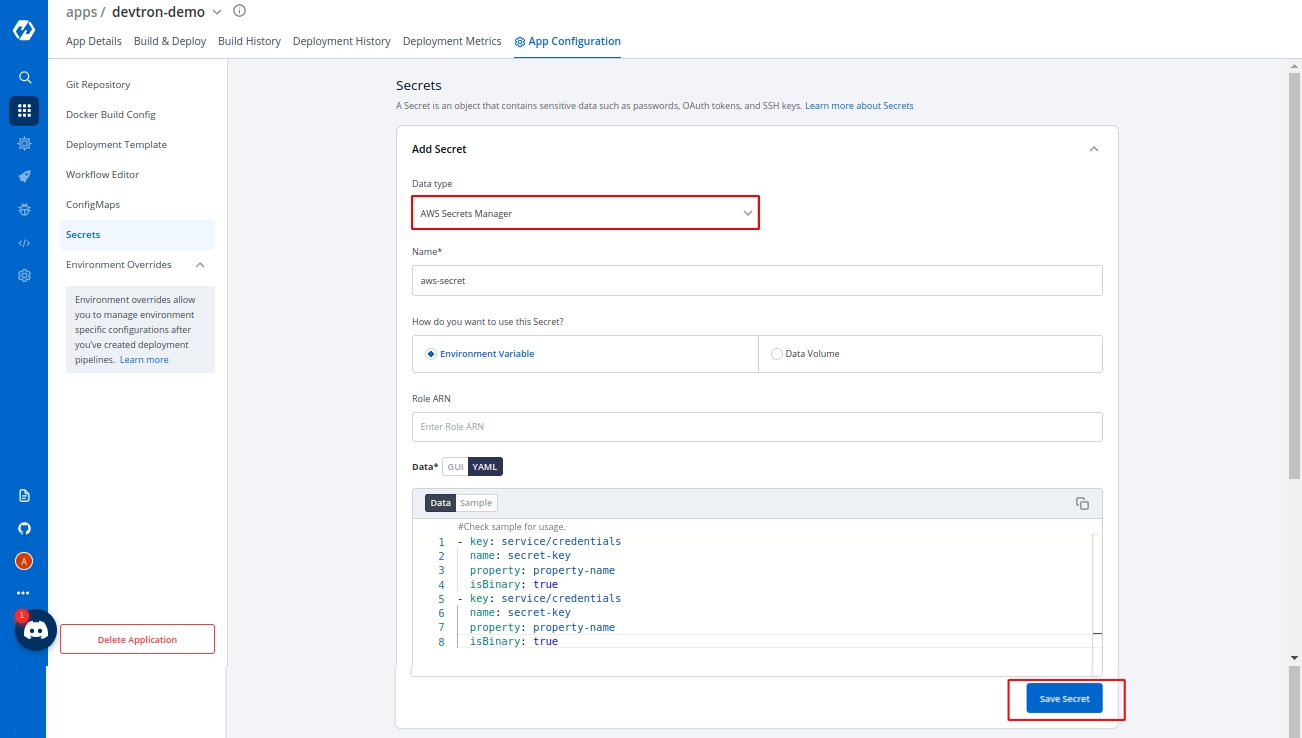

To add secrets from AWS secret manager, navigate to Secrets of the application and follow the steps mentioned below :

Click on Add Secret to add a new secret.

Select AWS Secret Manager from dropdown of Data type.

Provide a name to your secret.

Select how you want to use the secret. You may leave it selected as environment variable and also you may leave Role ARN empty.

In Data section, you will have to provide data in key-value format.

All the required field to pass your data to fetch secrets on devtron are described below :

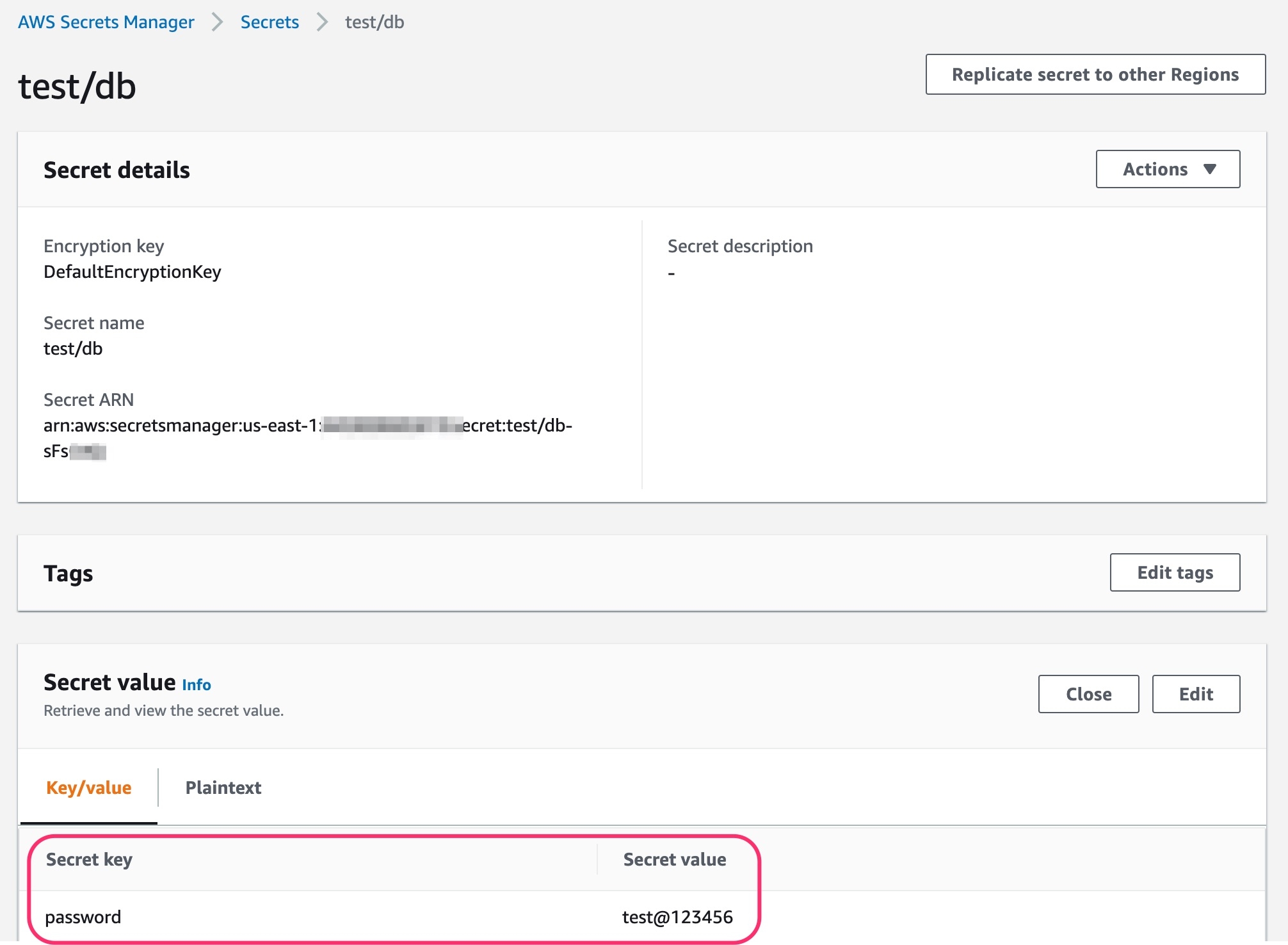

To add secrets in AWS secret manager, do the following steps :

Go to AWS secret manager console.

Click on Store a new secret.

Add and save your secret.

The Pre-Build and Post-Build stages allow you to create Pre/Post-Build CI tasks as explained .

Before you begin, for either GitHub or Bitbucket.

The "Pull Request" source type feature only works for the host GitHub or Bitbucket cloud for now. To request support for a different Git host, please create a github issue .

Devtron uses regexp library, view . You can test your custom regex from .

Before you begin, for either GitHub or Bitbucket.

|

The task type of the custom script may be a or a .

Trigger the

If you want to configure your ConfigMap and secrets at the application level then you can provide them in and , but if you want to have environment-specific ConfigMap and secrets then provide them under the Environment override Section. At the time of deployment, it will pick both of them and provide them inside your cluster.

[Note] It only works if Git Host is Github or Bitbucket Cloud as of now. In case you need support for any other Git Host, please create a .

Devtron uses regexp library, view . You can test your custom regex from .

Users can run the test case using the Devtron dashboard or by including the test cases in the devtron.ci.yaml file in the source git repository. For reference, check:

Author

Author of the PR

Source branch name

Branch from which the Pull Request is generated

Target branch name

Branch to which the Pull request will be merged

Title

Title of the Pull Request

State

State of the PR. Default is "open" and cannot be changed

Author